Part IB, 2004



State and prove the contraction mapping theorem.

Let be a positive real number, and take . Prove that the function

is a contraction from to . Find the unique fixed point of .
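The proof of the contraction mapping theorem is constructive. Iterating the map from any starting point produces a Cauchy sequence, and that sequence converges to the unique fixed point.

The Python sketch below runs this iteration. It stops using the a posteriori bound d(x_{n+1}, x*) <= k/(1-k) d(x_{n+1}, x_n), where k is the contraction constant. The function in the question is not reproduced in this copy, so the map used here is a hypothetical stand-in: cos maps [0, 1] into itself and is a contraction there with constant sin 1 < 1.

```python
import math

def fixed_point(T, x0, k, tol=1e-12, max_iter=10_000):
    """Iterate x_{n+1} = T(x_n) for a contraction T with constant k < 1.

    Stops once the a posteriori bound k / (1 - k) * |x_{n+1} - x_n|
    on the distance to the fixed point falls below tol.
    """
    if not 0 <= k < 1:
        raise ValueError("contraction constant must satisfy 0 <= k < 1")
    x = x0
    for _ in range(max_iter):
        x_next = T(x)
        if k / (1 - k) * abs(x_next - x) < tol:
            return x_next
        x = x_next
    raise RuntimeError("no convergence within max_iter steps")

# Hypothetical example: |cos'(x)| = |sin x| <= sin(1) < 1 on [0, 1].
root = fixed_point(math.cos, 0.5, k=math.sin(1.0))
print(root)
```

The contraction constant is passed in explicitly rather than estimated. The stopping rule is only valid when k really is a Lipschitz constant for T on the set in question.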

1.I.4G

Define what it means for a sequence of functions , where , to converge uniformly to a function .

For each of the following sequences of functions on , find the pointwise limit function. Which of these sequences converge uniformly? Justify your answers.

(i)

(ii)

(iii)
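Uniform convergence asks whether sup |f_n(x) - f(x)| tends to 0, whereas pointwise convergence only asks this for each fixed x. The sketch below samples that supremum on a grid. This is a heuristic check only: a grid can miss where the supremum is attained.

The sequences in the question are not reproduced in this copy, so two hypothetical ones on [0, 1] are used instead:
- f_n(x) = x/n converges uniformly.
- f_n(x) = x^n converges only pointwise, with true supremum 1 for every n.

```python
def sup_distance_on_grid(f_n, f, grid):
    """Largest |f_n(x) - f(x)| over the sample points in grid.

    This is only a lower bound for the supremum over the whole interval,
    so it can suggest (never prove) whether convergence is uniform.
    """
    return max(abs(f_n(x) - f(x)) for x in grid)

grid = [i / 1000 for i in range(1001)]  # sample points in [0, 1]

# Hypothetical sequence 1: f_n(x) = x / n, limit 0.
for n in (1, 10, 100):
    print(n, sup_distance_on_grid(lambda x: x / n, lambda x: 0.0, grid))

# Hypothetical sequence 2: f_n(x) = x**n, limit 0 on [0, 1) and 1 at x = 1.
limit = lambda x: 1.0 if x == 1 else 0.0
for n in (1, 10, 100):
    print(n, sup_distance_on_grid(lambda x: x ** n, limit, grid))
```

For any fixed grid, the sampled value for x^n eventually drops towards 0 as n grows. This happens even though the true supremum stays at 1, which is exactly why a proof is needed.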

1.II.15G

State the axioms for a norm on a vector space. Show that the usual Euclidean norm on ,

satisfies these axioms.

Let be any bounded convex open subset of that contains 0 and such that if then . Show that there is a norm on , satisfying the axioms, for which is the set of points in of norm less than 1.
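A norm with the required property can be built as the Minkowski functional (gauge) of the set U:

||x|| = inf{t > 0 : x/t in U}.

The sketch below evaluates this by bisection, using only a membership test for U. Bisection works because, for U convex and containing 0, x/t in U implies x/s in U for every s > t. The two sets are hypothetical examples in R^2: the open unit square and the open unit disc. Their gauges should reproduce the max-norm and the Euclidean norm respectively.

```python
import math

def gauge(x, in_U, tol=1e-12):
    """Minkowski functional inf{t > 0 : x / t in U} of a bounded convex open U containing 0."""
    if all(c == 0 for c in x):
        return 0.0
    # U is open and contains 0, so x / t lies in U once t is large enough.
    hi = 1.0
    while not in_U([c / hi for c in x]):
        hi *= 2.0
    lo = 0.0
    # Invariant: x / hi is in U and x / lo is not (lo = 0 stands for "infinitely far").
    while hi - lo > tol:
        mid = (lo + hi) / 2.0
        if in_U([c / mid for c in x]):
            hi = mid
        else:
            lo = mid
    return hi

open_square = lambda y: max(abs(c) for c in y) < 1.0   # hypothetical U
open_disc = lambda y: math.hypot(*y) < 1.0             # hypothetical U

print(gauge([0.5, -0.25], open_square))  # close to max(|x1|, |x2|)
print(gauge([3.0, 4.0], open_disc))      # close to the Euclidean norm
```

The tolerance is absolute, so the gauge of very small vectors is only resolved to within tol.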

2.I.3G

Consider a sequence of continuous functions . Suppose that the functions converge uniformly to some continuous function . Show that the integrals converge to .

Give an example to show that, even if the functions and are differentiable, the derivatives need not converge to .

2.II.14G

Let be a non-empty complete metric space. Give an example to show that the intersection of a descending sequence of non-empty closed subsets of , can be empty. Show that if we also assume that

then the intersection is not empty. Here the diameter is defined as the supremum of the distances between any two points of a set .

We say that a subset of is dense if it has nonempty intersection with every nonempty open subset of . Let be any sequence of dense open subsets of . Show that the intersection is not empty.

[Hint: Look for a descending sequence of subsets , with , such that the previous part of this problem applies.]

3.I.4F

Let and be metric spaces with metrics and . If and are any two points of , prove that the formula

defines a metric on . If , prove that the diagonal of is closed in .

4.I.3F

Let be open sets in , respectively, and let be a map. What does it mean for to be differentiable at a point of ?

Let be the map given by

Prove that is differentiable at all points with .

4.II.13F

State the inverse function theorem for maps , where is a non-empty open subset of .

Let be the function defined by

Find a non-empty open subset of such that is locally invertible on , and compute the derivative of the local inverse.

Let be the set of all points in satisfying

Prove that is locally invertible at all points of except and . Deduce that, for each point in except and , there exist open intervals containing , respectively, such that for each in , there is a unique point in with in .

1.I.5A

Determine the poles of the following functions and calculate their residues there. (i) , (ii) , (iii) .

1.II.16A

Let and be two polynomials such that

where are distinct non-real complex numbers and . Using contour integration, determine

carefully justifying all steps.

2.I.5A

Let the functions and be analytic in an open, nonempty domain and assume that there. Prove that if in then there exists such that .

2.II.16A

Prove by using the Cauchy theorem that if is analytic in the open disc then there exists a function , analytic in , such that , .

4.I.5A

State and prove the Parseval formula.

[You may use without proof properties of convolution, as long as they are precisely stated.]

4.II.15A

(i) Show that the inverse Fourier transform of the function

is

(ii) Determine, by using Fourier transforms, the solution of the Laplace equation

given in the strip , together with the boundary conditions

where has been given above.

[You may use without proof properties of Fourier transforms.]

1.I.7B

Write down Maxwell's equations and show that they imply the conservation of charge.

In a conducting medium of conductivity , where , show that any charge density decays in time exponentially at a rate to be determined.

1.II.18B

Inside a volume there is an electrostatic charge density , which induces an electric field with associated electrostatic potential . The potential vanishes on the boundary of . The electrostatic energy is

Derive the alternative form

A capacitor consists of three identical and parallel thin metal circular plates of area positioned in the planes and , with , with centres on the axis, and at potentials and 0 respectively. Find the electrostatic energy stored, verifying that expressions (1) and (2) give the same results. Why is the energy minimal when ?

2.I.7B

Write down the two Maxwell equations that govern steady magnetic fields. Show that the boundary conditions satisfied by the magnetic field on either side of a sheet carrying a surface current of density , with normal to the sheet, are

Write down the force per unit area on the surface current.

2.II.18B

The vector potential due to a steady current density is given by

where you may assume that extends only over a finite region of space. Use to derive the Biot-Savart law

A circular loop of wire of radius carries a current . Take Cartesian coordinates with the origin at the centre of the loop and the -axis normal to the loop. Use the Biot-Savart law to show that on the -axis the magnetic field is in the axial direction and of magnitude

3.I.7B

A wire is bent into the shape of three sides of a rectangle and is held fixed in the plane, with base and , and with arms and . A second wire moves smoothly along the arms: and with . The two wires have resistance per unit length and mass per unit length. There is a time-varying magnetic field in the -direction.

Using the law of induction, find the electromotive force around the circuit made by the two wires.

Using the Lorentz force, derive the equation

3.II.19B

Starting from Maxwell's equations, derive the law of energy conservation in the form

where and .

Evaluate and for the plane electromagnetic wave in vacuum

where the relationships between and should be determined. Show that the electromagnetic energy propagates at speed , i.e. show that .

1.I.9C

From the general mass-conservation equation, show that the velocity field of an incompressible fluid is solenoidal, i.e. that .

Verify that the two-dimensional flow

is solenoidal and find a streamfunction such that .

1.II.20C

A layer of water of depth flows along a wide channel with uniform velocity , in Cartesian coordinates , with measured downstream. The bottom of the channel is at , and the free surface of the water is at . Waves are generated on the free surface so that it has the new position .

Write down the equation and the full nonlinear boundary conditions for the velocity potential (for the perturbation velocity) and the motion of the free surface.

By linearizing these equations about the state of uniform flow, show that

where is the acceleration due to gravity.

Hence, determine the dispersion relation for small-amplitude surface waves

3.I.10C

State Bernoulli's equation for unsteady motion of an irrotational, incompressible, inviscid fluid subject to a conservative body force .

A long vertical U-tube of uniform cross section contains an inviscid, incompressible fluid whose surface, in equilibrium, is at height above the base. Derive the equation

governing the displacement of the surface on one side of the U-tube, where is time and is the acceleration due to gravity.

3.II.21C

Use separation of variables to determine the irrotational, incompressible flow

around a solid sphere of radius translating at velocity along the direction in spherical polar coordinates and .

Show that the total kinetic energy of the fluid is

where is the mass of fluid displaced by the sphere.

A heavy sphere of mass is released from rest in an inviscid fluid. Determine its speed after it has fallen through a distance in terms of and .

4.I.8C

Write down the vorticity equation for the unsteady flow of an incompressible, inviscid fluid with no body forces acting.

Show that the flow field

has uniform vorticity of magnitude for some constant .

4.II.18C

Use Euler's equation to derive the momentum integral

for the steady flow and pressure of an inviscid, incompressible fluid of density , where is a closed surface with normal .

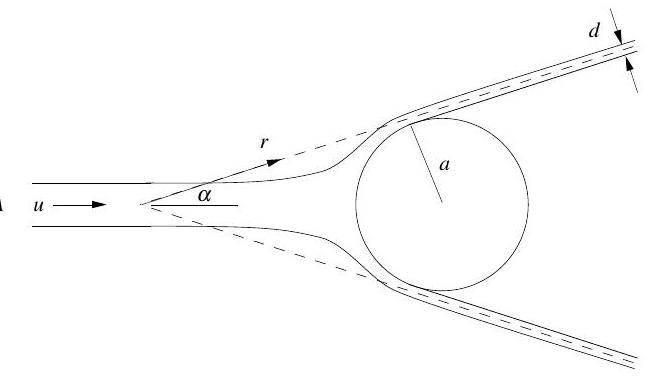

A cylindrical jet of water of area and speed impinges axisymmetrically on a stationary sphere of radius and is deflected into a conical sheet of vertex angle as shown. Gravity is being ignored.

Use a suitable form of Bernoulli's equation to determine the speed of the water in the conical sheet, being careful to state how the equation is being applied.

Use conservation of mass to show that the width of the sheet far from the point of impact is given by

where is the distance along the sheet measured from the vertex of the cone.

Finally, use the momentum integral to determine the net force on the sphere in terms of and .

Let be the topology on consisting of the empty set and all sets such that is finite. Let be the usual topology on , and let be the topology on consisting of the empty set and all sets of the form for some real .

(i) Prove that all continuous functions are constant.

(ii) Give an example with proof of a non-constant function that is continuous.

(i) Explain why the formula

defines a function that is analytic on the domain . [You need not give full details, but should indicate what results are used.]

Show also that for every such that is defined.

(ii) Write for whenever with and . Let be defined by the formula

Prove that is analytic on .

[Hint: What would be the effect of redefining to be when , and ?]

(iii) Determine the nature of the singularity of at .

2.II.15E

(i) Let be the set of all infinite sequences such that for all . Let be the collection of all subsets such that, for every there exists such that whenever . Prove that is a topology on .

(ii) Let a distance be defined on by

Prove that is a metric and that the topology arising from is the same as .

3.I.5E

Let be the contour that goes once round the boundary of the square

in an anticlockwise direction. What is ? Briefly justify your answer.

Explain why the integrals along each of the four edges of the square are equal.

Deduce that .

4.I.4E

(i) Let be the open unit disc of radius 1 about the point . Prove that there is an analytic function such that for every .

(ii) Let , Re . Explain briefly why there is at most one extension of to a function that is analytic on .

(iii) Deduce that cannot be extended to an analytic function on .

4.II.14E

(i) State and prove Rouché's theorem.

[You may assume the principle of the argument.]

(ii) Let . Prove that the polynomial has three roots with modulus less than 3. Prove that one root satisfies ; another, , satisfies , Im ; and the third, , has .

(iii) For sufficiently small , prove that .

[You may use results from the course if you state them precisely.]
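Root counts obtained from Rouché's theorem can be sanity-checked numerically with the argument principle. The number of zeros of a polynomial p inside |z| = R equals the winding number of p(z) about 0 as z goes once round the circle.

The sketch below approximates that winding number by summing small phase increments. It assumes no zero lies on, or very close to, the circle. The polynomial is a hypothetical example with known roots, not the one in part (ii).

```python
import cmath
import math

def zeros_inside(p, radius, samples=4096):
    """Winding number of p(z) about 0 as z goes once round |z| = radius.

    Each step adds the phase change of p between neighbouring sample points;
    this is accurate when the step is small compared with the distance from
    the circle to the nearest zero of p.
    """
    total = 0.0
    prev = p(radius)
    for k in range(1, samples + 1):
        z = radius * cmath.exp(2j * math.pi * k / samples)
        cur = p(z)
        total += cmath.phase(cur / prev)
        prev = cur
    return round(total / (2 * math.pi))

# Hypothetical polynomial with roots 0.5, 2 and -3.
p = lambda z: (z - 0.5) * (z - 2) * (z + 3)
print([zeros_inside(p, r) for r in (1.0, 2.5, 4.0)])
```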

1.I.3G

Using the Riemannian metric

define the length of a curve and the area of a region in the upper half-plane .

Find the hyperbolic area of the region .

1.II.14G

Show that for every hyperbolic line in the hyperbolic plane there is an isometry of which is the identity on but not on all of . Call it the reflection .

Show that every isometry of is a composition of reflections.

3.I.3G

State Euler's formula for a convex polyhedron with faces, edges, and vertices.

Show that any regular polyhedron whose faces are pentagons has the same number of vertices, edges and faces as the dodecahedron.
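The counting argument combines Euler's formula V - E + F = 2 with two edge counts:
- pF = 2E, since each edge borders two faces.
- qV = 2E, since each edge has two endpoints.

The sketch below searches over q for a given face size p. It reproduces only this arithmetic and does not construct any polyhedron.

```python
from fractions import Fraction

def regular_polyhedra(p):
    """(q, V, E, F) allowed by Euler's formula for regular polyhedra with
    p-gon faces, q faces meeting at each vertex."""
    if p < 3:
        raise ValueError("faces need at least three sides")
    results = []
    q = 3
    while True:
        denom = Fraction(2, q) + Fraction(2, p) - 1   # E * denom = 2
        if denom <= 0:                               # denom decreases as q grows
            break
        E = 2 / denom
        V, F = 2 * E / q, 2 * E / p
        if all(x.denominator == 1 for x in (E, V, F)):
            results.append((q, int(V), int(E), int(F)))
        q += 1
    return results

print(regular_polyhedra(5))
```

Exact `Fraction` arithmetic keeps the integrality test free of rounding errors.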

3.II.15G

Let be the lengths of a right-angled triangle in spherical geometry, where is the hypotenuse. Prove the Pythagorean theorem for spherical geometry in the form

Now consider such a spherical triangle with the sides replaced by for a positive number . Show that the above formula approaches the usual Pythagorean theorem as approaches zero.
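The displayed formula is missing from this copy. On a sphere of radius 1, the spherical Pythagorean theorem is usually written

cos c = cos a cos b,

and the sketch below assumes that form. It scales the legs by λ, solves for the hypotenuse, divides by λ, and compares the result with the planar value √(a² + b²) as λ shrinks. The side lengths used are hypothetical.

```python
import math

def spherical_hypotenuse(a, b):
    """Hypotenuse of a right-angled triangle on the unit sphere, assuming cos c = cos a cos b."""
    return math.acos(math.cos(a) * math.cos(b))

a, b = 3.0, 4.0   # hypothetical leg lengths
for lam in (0.3, 0.1, 0.01, 0.001):
    # Divide by lam so the result is comparable with the planar hypotenuse.
    c_scaled = spherical_hypotenuse(lam * a, lam * b) / lam
    print(lam, c_scaled, c_scaled - math.hypot(a, b))
```

The difference printed in the last column shrinks roughly like λ², consistent with expanding cos to second order.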

Let be a finite group of order . Let be a subgroup of . Define the normalizer of , and prove that the number of distinct conjugates of is equal to the index of in . If is a prime dividing , deduce that the number of Sylow -subgroups of must divide .

[You may assume the existence and conjugacy of Sylow subgroups.]

Prove that any group of order 72 must have either 1 or 4 Sylow 3-subgroups.
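The arithmetic step for order 72 combines two facts, writing |G| = p^k m with p not dividing m:
- the count n_p divides |G| and is coprime to p, so it divides m;
- n_p ≡ 1 (mod p), which is part of the standard Sylow theorems.

The sketch below lists the values that survive both conditions. It is only the arithmetic filter, not a proof that each value occurs.

```python
def sylow_counts(order, p):
    """Values of n_p allowed by Sylow's theorems: n_p divides m and
    n_p = 1 (mod p), where order = p**k * m with p not dividing m."""
    m = order
    while m % p == 0:
        m //= p
    return [d for d in range(1, m + 1) if m % d == 0 and d % p == 1]

print(sylow_counts(72, 3))
```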

Let be the group consisting of 3-dimensional row vectors with integer components. Let be the subgroup of generated by the three vectors

(i) What is the index of in ?

(ii) Prove that is not a direct summand of .

(iii) Is the subgroup generated by and a direct summand of ?

(iv) What is the structure of the quotient group ?
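For a subgroup H of Z³ generated by three vectors, the index and the quotient can be read off from the invariant factors d₁ | d₂ | d₃ of the integer matrix whose rows are the generators (its Smith normal form). If all the d_i are non-zero, then:
- Z³/H ≅ Z/d₁ ⊕ Z/d₂ ⊕ Z/d₃;
- the index is |det| = d₁d₂d₃.

The sketch below computes the invariant factors as ratios of determinantal divisors (gcds of all k × k minors), which is adequate for tiny matrices. The generators in the question are not reproduced in this copy, so the matrices are hypothetical.

```python
from itertools import combinations
from math import gcd

def det(m):
    """Determinant by cofactor expansion along the first row (fine for tiny matrices)."""
    if len(m) == 1:
        return m[0][0]
    return sum((-1) ** j * m[0][j] * det([row[:j] + row[j + 1:] for row in m[1:]])
               for j in range(len(m)))

def invariant_factors(a):
    """Invariant factors d_1 | d_2 | ... of a square integer matrix, computed as
    ratios of determinantal divisors (gcd of all k x k minors)."""
    n = len(a)
    previous, factors = 1, []
    for k in range(1, n + 1):
        g = 0
        for rows in combinations(range(n), k):
            for cols in combinations(range(n), k):
                g = gcd(g, det([[a[i][j] for j in cols] for i in rows]))
        if g == 0:
            raise ValueError("rank < n: the subgroup has infinite index")
        factors.append(g // previous)
        previous = g
    return factors

# Hypothetical generators of H, one per row.
H = [[1, 2, 3],
     [4, 5, 6],
     [7, 8, 10]]
print(invariant_factors(H), abs(det(H)))
```

For this hypothetical H the factors are 1, 1, 3, so Z³/H would be cyclic of order 3.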

Let be the subring of all in of the form

where and are in and . Prove that is a non-negative element of , for all in . Prove that the multiplicative group of units of has order 6. Prove that is the intersection of two prime ideals of .

[You may assume that is a unique factorization domain.]

1.II.13F

State the structure theorem for finitely generated abelian groups. Prove that a finitely generated abelian group is finite if and only if there exists a prime such that .

Show that there exist abelian groups such that for all primes . Prove directly that your example of such an is not finitely generated.

2.I.2F

Prove that the alternating group is simple.
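One standard route to the simplicity of A₅ uses class sizes. A normal subgroup is a union of conjugacy classes that contains the identity, and its order must divide 60.

The sketch below computes the conjugacy classes of A₅ by brute force on permutations of {0, ..., 4}. It then lists every union order that is compatible with Lagrange's theorem. This checks the arithmetic only; a written proof still has to justify the class sizes.

```python
from itertools import combinations, permutations

def is_even(p):
    inversions = sum(1 for i in range(len(p)) for j in range(i + 1, len(p)) if p[i] > p[j])
    return inversions % 2 == 0

def compose(p, q):
    """Permutation p after q, as tuples: (p o q)(i) = p[q[i]]."""
    return tuple(p[i] for i in q)

def inverse(p):
    inv = [0] * len(p)
    for i, image in enumerate(p):
        inv[image] = i
    return tuple(inv)

A5 = [p for p in permutations(range(5)) if is_even(p)]

classes = []
remaining = set(A5)
while remaining:
    h = next(iter(remaining))
    cls = {compose(compose(g, h), inverse(g)) for g in A5}   # conjugacy class of h
    classes.append(cls)
    remaining -= cls

sizes = sorted(len(c) for c in classes)
# Unions of classes containing the identity class (size 1) whose order divides 60.
others = sizes[1:]
possible_orders = sorted({1 + sum(combo)
                          for r in range(len(others) + 1)
                          for combo in combinations(others, r)
                          if 60 % (1 + sum(combo)) == 0})
print(len(A5), sizes, possible_orders)
```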

2.II.13F

Let be a subgroup of a group . Prove that is normal if and only if there is a group and a homomorphism such that

Let be the group of all matrices with in and . Let be a prime number, and take to be the subset of consisting of all with and . Prove that is a normal subgroup of .

4.I.2F

State Gauss's lemma and Eisenstein's irreducibility criterion. Prove that the following polynomials are irreducible in :

(i) ;

(ii) ;

(iii) , where is any prime number.
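Eisenstein's criterion is a finite check on the coefficients. The sketch below tests it at a given prime. The polynomials in the question are not reproduced in this copy, so the inputs are hypothetical:
- x⁴ + 10x + 5 at p = 5;
- the textbook shift ((x + 1)^p - 1)/x at p = 7.

Failing the test at one prime does not show that a polynomial is reducible.

```python
from math import comb

def is_eisenstein(coeffs, p):
    """Eisenstein's criterion at the prime p for coeffs = [a_n, ..., a_1, a_0]
    (highest degree first): p does not divide a_n, p divides every other
    coefficient, and p**2 does not divide a_0."""
    leading, rest, constant = coeffs[0], coeffs[1:], coeffs[-1]
    return (leading % p != 0
            and all(a % p == 0 for a in rest)
            and constant % (p * p) != 0)

def shifted_cyclotomic(p):
    """Coefficients of ((x + 1)**p - 1) / x, highest degree first."""
    return [comb(p, k) for k in range(p, 0, -1)]

print(is_eisenstein([1, 0, 0, 10, 5], 5))      # hypothetical x^4 + 10x + 5
print(is_eisenstein(shifted_cyclotomic(7), 7))
```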

4.II.12F

Answer the following questions, fully justifying your answer in each case.

(i) Give an example of a ring in which some non-zero prime ideal is not maximal.

(ii) Prove that is not a principal ideal domain.

(iii) Does there exist a field such that the polynomial is irreducible in ?

(iv) Is the ring an integral domain?

(v) Determine all ring homomorphisms .

1.I.1H

Suppose that is a linearly independent set of distinct elements of a vector space and spans . Prove that may be reordered, as necessary, so that spans .

Suppose that is a linearly independent set of distinct elements of and that spans . Show that .

1.II.12H

Let and be subspaces of the finite-dimensional vector space . Prove that both the sum and the intersection are subspaces of . Prove further that

Let be the kernels of the maps given by the matrices and respectively, where

Find a basis for the intersection , and extend this first to a basis of , and then to a basis of .

2.I.1E

For each let be the matrix defined by

What is ? Justify your answer.

[It may be helpful to look at the cases before tackling the general case.]

2.II.12E

Let be a quadratic form on a real vector space of dimension . Prove that there is a basis with respect to which is given by the formula

Prove that the numbers and are uniquely determined by the form . By means of an example, show that the subspaces and need not be uniquely determined by .

3.I.1E

Let be a finite-dimensional vector space over . What is the dual space of ? Prove that the dimension of the dual space is the same as that of .

3.II.13E

(i) Let be an -dimensional vector space over and let be an endomorphism. Suppose that the characteristic polynomial of is , where the are distinct and for every .

Describe all possibilities for the minimal polynomial and prove that there are no further ones.

(ii) Give an example of a matrix for which both the characteristic and the minimal polynomial are .

(iii) Give an example of two matrices with the same rank and the same minimal and characteristic polynomials such that there is no invertible matrix with .

4.I.1E

Let be a real -dimensional inner-product space and let be a dimensional subspace. Let be an orthonormal basis for . In terms of this basis, give a formula for the orthogonal projection .

Let . Prove that is the closest point in to .

[You may assume that the sequence can be extended to an orthonormal basis of .]
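With an orthonormal basis e₁, ..., e_k of U, the orthogonal projection is usually written

π(v) = Σᵢ ⟨v, eᵢ⟩eᵢ.

The sketch below computes this in Rⁿ with the dot product. It then checks the closest-point property on a few sample points of U; this is the identity |v - w|² = |v - π(v)|² + |π(v) - w|² in action. The subspace and vector are hypothetical.

```python
def inner(u, v):
    return sum(a * b for a, b in zip(u, v))

def project(v, basis):
    """Orthogonal projection of v onto span(basis); basis must be orthonormal."""
    coeffs = [inner(v, e) for e in basis]
    return [sum(c * e[i] for c, e in zip(coeffs, basis)) for i in range(len(v))]

def dist(u, v):
    d = [a - b for a, b in zip(u, v)]
    return inner(d, d) ** 0.5

# Hypothetical data: U is the plane spanned by two orthonormal vectors in R^3.
s = 2 ** -0.5
basis = [[s, s, 0.0], [0.0, 0.0, 1.0]]
v = [1.0, 3.0, -2.0]
pv = project(v, basis)

# Other points of U are never closer to v than pv.
samples = [(0.0, 0.0), (1.0, -1.0), (3.0, -2.0), (2.8, -2.1)]
others = [[c1 * x + c2 * y for x, y in zip(*basis)] for c1, c2 in samples]
print(pv, dist(v, pv), [dist(v, w) for w in others])
```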

4.II.11E

(i) Let be an -dimensional inner-product space over and let be a Hermitian linear map. Prove that has an orthonormal basis consisting of eigenvectors of .

(ii) Let be another Hermitian map. Prove that is Hermitian if and only if .

(iii) A Hermitian map is positive-definite if for every non-zero vector . If is a positive-definite Hermitian map, prove that there is a unique positive-definite Hermitian map such that .
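The existence part of (iii) follows from (i). Diagonalise A = U diag(λ) U* with U unitary and every λᵢ > 0, then take B = U diag(√λ) U*.

The NumPy sketch below does this with `numpy.linalg.eigh`. The matrix is a hypothetical example with eigenvalues 1 and 3. Uniqueness is not something a computation can demonstrate.

```python
import numpy as np

def hermitian_sqrt(A):
    """Positive-definite square root of a positive-definite Hermitian matrix A,
    via the spectral decomposition A = U diag(lam) U* (numpy.linalg.eigh)."""
    A = np.asarray(A)
    if not np.allclose(A, A.conj().T):
        raise ValueError("A must be Hermitian")
    lam, U = np.linalg.eigh(A)
    if np.any(lam <= 0):
        raise ValueError("A must be positive-definite")
    # U * sqrt(lam) scales column j of U by sqrt(lam[j]), i.e. U @ diag(sqrt(lam)).
    return (U * np.sqrt(lam)) @ U.conj().T

A = np.array([[2.0, 1.0j], [-1.0j, 2.0]])   # hypothetical Hermitian matrix, eigenvalues 1 and 3
B = hermitian_sqrt(A)
print(np.round(B, 6))
```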

1.I.11H

Let be a transition matrix. What does it mean to say that is (a) irreducible, (b) recurrent?

Suppose that is irreducible and recurrent and that the state space contains at least two states. Define a new transition matrix by

Prove that is also irreducible and recurrent.

1.II.22H

Consider the Markov chain with state space and transition matrix

Determine the communicating classes of the chain, and for each class indicate whether it is open or closed.

Suppose that the chain starts in state 2; determine the probability that it ever reaches state 6.

Suppose that the chain starts in state 3; determine the probability that it is in state 6 after exactly transitions, .
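Hitting probabilities form the minimal non-negative solution of:
- hᵢ = 1 on the target;
- hᵢ = Σⱼ pᵢⱼhⱼ elsewhere.

Setting hᵢ = 0 on states that cannot reach the target makes the remaining linear system uniquely solvable. The transition matrix in the question is not reproduced in this copy, so the sketch below is checked on a hypothetical gambler's-ruin chain on {0, 1, 2, 3} with fair steps.

```python
import numpy as np

def hitting_probabilities(P, target):
    """h_i = P(chain started at i ever visits target), as the minimal
    non-negative solution of h = 1 on target, h_i = sum_j p_ij h_j elsewhere."""
    P = np.asarray(P, dtype=float)
    n = len(P)
    target = set(target)
    # States that can reach the target (reverse reachability); all others get h_i = 0.
    reach = set(target)
    grew = True
    while grew:
        grew = False
        for i in range(n):
            if i not in reach and any(P[i, j] > 0 for j in reach):
                reach.add(i)
                grew = True
    unknown = [i for i in range(n) if i in reach and i not in target]
    h = np.zeros(n)
    h[list(target)] = 1.0
    if unknown:
        # On these states I - Q is invertible, so the system has a unique solution.
        Q = P[np.ix_(unknown, unknown)]
        b = P[np.ix_(unknown, sorted(target))].sum(axis=1)
        h[unknown] = np.linalg.solve(np.eye(len(unknown)) - Q, b)
    return h

# Hypothetical chain: 0 and 3 absorbing, fair steps in between.
P = [[1.0, 0.0, 0.0, 0.0],
     [0.5, 0.0, 0.5, 0.0],
     [0.0, 0.5, 0.0, 0.5],
     [0.0, 0.0, 0.0, 1.0]]
print(hitting_probabilities(P, {3}))
```

For the n-step question, the required probability is an entry of the matrix power Pⁿ. For a concrete n it can be computed with `np.linalg.matrix_power(P, n)[i, j]`.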

2.I.11H

Let be an irreducible, positive-recurrent Markov chain on the state space with transition matrix and initial distribution , where is the unique invariant distribution. What does it mean to say that the Markov chain is reversible?

Prove that the Markov chain is reversible if and only if for all .

2.II.22H

Consider a Markov chain on the state space with transition probabilities as illustrated in the diagram below, where and .

For each value of , determine whether the chain is transient, null recurrent or positive recurrent.

When the chain is positive recurrent, calculate the invariant distribution.

1.I.6B

Write down the general isotropic tensors of rank 2 and 3.

According to a theory of magnetostriction, the mechanical stress described by a second-rank symmetric tensor is induced by the magnetic field vector . The stress is linear in the magnetic field,

where is a third-rank tensor which depends only on the material. Show that can be non-zero only in anisotropic materials.

1.II.17B

The equation governing small amplitude waves on a string can be written as

The end points and are fixed at . At , the string is held stationary in the waveform,

The string is then released. Find in the subsequent motion.

Given that the energy

is constant in time, show that

2.I.6B

Write down the general form of the solution in polar coordinates to Laplace's equation in two dimensions.

Solve Laplace's equation for in and in , subject to the conditions

2.II.17B

Let be the moment-of-inertia tensor of a rigid body relative to the point . If is the centre of mass of the body and the vector has components , show that

where is the mass of the body.

Consider a cube of uniform density and side , with centre at the origin. Find the inertia tensor about the centre of mass, and thence about the corner .

Find the eigenvectors and eigenvalues of .
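The displayed relation between the two inertia tensors is missing from this copy. In its standard form it is the parallel-axis theorem

I_ij(P) = I_ij(G) + M(|a|²δ_ij - a_i a_j),

where a is the vector between P and the centre of mass G. For a uniform cube of mass M and side s, I(G) = (Ms²/6) δ_ij.

The sketch below applies this with hypothetical values M = s = 1, since the side in the question is not reproduced. It then diagonalises the tensor about a corner.

```python
import numpy as np

def cube_inertia_about_centre(M, s):
    # Uniform cube of mass M and side s: M s^2 / 6 about every axis through the centre.
    return (M * s ** 2 / 6.0) * np.eye(3)

def parallel_axis(I_G, M, a):
    """Inertia tensor about a point P, where a is the vector from P to the centre of mass G."""
    a = np.asarray(a, dtype=float)
    return I_G + M * ((a @ a) * np.eye(3) - np.outer(a, a))

M, s = 1.0, 1.0   # hypothetical mass and side length
I_corner = parallel_axis(cube_inertia_about_centre(M, s), M, [s / 2, s / 2, s / 2])
eigenvalues, eigenvectors = np.linalg.eigh(I_corner)
print(eigenvalues)
print(eigenvectors[:, 0])   # axis belonging to the smallest eigenvalue
```

With M = s = 1, the smallest eigenvalue belongs to the body diagonal (1, 1, 1)/√3. The other two eigenvalues are equal, so any pair of orthonormal vectors perpendicular to the diagonal will do for them.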

3.I.6D

Let

For any variation with , show that when with

By using integration by parts, show that

3.II.18D

Starting from the Euler-Lagrange equations, show that the condition for the variation of the integral to be stationary is

In a medium with speed of light the ray path taken by a light signal between two points satisfies the condition that the time taken is stationary. Consider the region and suppose . Derive the equation for the light ray path . Obtain the solution of this equation and show that the light ray between and is given by

if .

Sketch the path for close to and evaluate the time taken for a light signal between these points.

[The substitution , for some constant , should prove useful in solving the differential equation.]

4.I.6C

Chebyshev polynomials satisfy the differential equation

where is an integer.

Recast this equation into Sturm-Liouville form and hence write down the orthogonality relationship between and for .

By writing , or otherwise, show that the polynomial solutions of ( ) are proportional to .

4.II.16C

Obtain the Green function satisfying

where is real, subject to the boundary conditions

[Hint: You may find the substitution helpful.]

Use the Green function to determine that the solution of the differential equation

subject to the boundary conditions

is

2.I.9A

Determine the coefficients of Gaussian quadrature for the evaluation of the integral

that uses two function evaluations.
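A two-point Gaussian rule for a weight w can be built from four moments, μ_k = ∫ x^k w(x) dx for k = 0, ..., 3:
- the nodes are the zeros of the monic quadratic that is w-orthogonal to 1 and x;
- the weights make the rule exact for 1 and x;
- the resulting rule is then exact for all cubics.

The weight function and interval of the question are not reproduced in this copy. The examples below use w = 1 on [-1, 1] (Gauss-Legendre) and w = 1 on [0, 1] as hypothetical cases.

```python
import math

def two_point_gauss(mu):
    """Nodes and weights of the 2-point Gaussian rule for a positive weight w,
    given mu = [mu_0, mu_1, mu_2, mu_3] with mu_k = integral of x**k w(x)."""
    m0, m1, m2, m3 = mu
    # Orthogonality of x**2 + a x + b to 1 and x:
    #   m2 + a m1 + b m0 = 0  and  m3 + a m2 + b m1 = 0.
    det = m1 * m1 - m0 * m2
    a = (m0 * m3 - m1 * m2) / det
    b = (m2 * m2 - m1 * m3) / det
    root = math.sqrt(a * a - 4 * b)
    x1, x2 = (-a - root) / 2, (-a + root) / 2
    # Exactness for 1 and x: w1 + w2 = m0 and w1 x1 + w2 x2 = m1.
    w1 = (m1 - m0 * x2) / (x1 - x2)
    return (x1, x2), (w1, m0 - w1)

def apply_rule(nodes, weights, f):
    return sum(w * f(x) for x, w in zip(nodes, weights))

print(two_point_gauss([2.0, 0.0, 2.0 / 3.0, 0.0]))   # w = 1 on [-1, 1]
nodes, weights = two_point_gauss([1.0, 1 / 2, 1 / 3, 1 / 4])   # w = 1 on [0, 1]
print(apply_rule(nodes, weights, lambda x: x ** 3))   # exact for cubics, so 1/4 up to rounding
```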

2.II.20A

Given an matrix and , prove that the vector is the solution of the least-squares problem for if and only if . Let

Determine the solution of the least-squares problem for .
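A least-squares solution x makes the residual Ax - b orthogonal to every column of A. Equivalently, x solves the normal equations AᵀAx = Aᵀb.

The NumPy sketch below solves those equations for a hypothetical full-column-rank matrix, since the matrix in the question is not reproduced in this copy. It cross-checks the result with `numpy.linalg.lstsq`.

```python
import numpy as np

A = np.array([[1.0, 0.0], [1.0, 1.0], [1.0, 2.0]])   # hypothetical 3x2 matrix
b = np.array([6.0, 0.0, 0.0])                         # hypothetical right-hand side

# Normal equations A^T A x = A^T b; A has full column rank, so A^T A is invertible.
x = np.linalg.solve(A.T @ A, A.T @ b)

# The residual should be orthogonal to the columns of A.
residual = A @ x - b
print(x, A.T @ residual)
print(np.linalg.lstsq(A, b, rcond=None)[0])
```

Forming AᵀA squares the condition number. For ill-conditioned problems a QR-based method, such as the one behind `lstsq`, is usually preferred.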

3.I.11A

The linear system

where real and are given, is solved by the iterative procedure

Determine the conditions on that guarantee convergence.
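The system and the iterative procedure are not reproduced in this copy. For any stationary iteration written as x^(k+1) = Hx^(k) + v, the standard criterion is that it converges for every starting vector if and only if the spectral radius ρ(H) < 1.

The sketch below computes ρ(H) and runs the iteration for a hypothetical iteration matrix. If H depends on a parameter, the condition becomes ρ(H(parameter)) < 1.

```python
import numpy as np

def spectral_radius(H):
    return max(abs(np.linalg.eigvals(H)))

def iterate(H, v, x0, steps):
    """Run x_{k+1} = H x_k + v for a fixed number of steps."""
    x = np.asarray(x0, dtype=float)
    for _ in range(steps):
        x = H @ x + v
    return x

H = np.array([[0.5, 0.3], [0.0, 0.2]])   # hypothetical iteration matrix
v = np.array([1.0, 1.0])
print(spectral_radius(H))
x = iterate(H, v, [0.0, 0.0], 200)
print(x, np.linalg.solve(np.eye(2) - H, v))   # the limit solves (I - H) x = v
```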

3.II.22A

Given , we approximate by the linear combination

By finding the Peano kernel, determine the least constant such that

3.I.12G

Consider the two-person zero-sum game Rock, Scissors, Paper. That is, a player gets 1 point by playing Rock when the other player chooses Scissors, or by playing Scissors against Paper, or Paper against Rock; the losing player gets point. Zero points are received if both players make the same move.

Suppose player one chooses Rock and Scissors (but never Paper) with probabilities and . Write down the maximization problem for player two's optimal strategy. Determine the optimal strategy for each value of .
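Player two's expected payoff is linear in their mixed strategy, so it is maximised at a pure best response. Any mixture of tied best responses is also optimal.

The sketch below finds the best pure responses for a given p. It assumes +1 for a win, -1 for a loss and 0 for a tie, with p the probability that player one plays Rock. `Fraction` is used so that ties are detected exactly.

```python
from fractions import Fraction

# Player two's payoff: PAYOFF[two's move][one's move]; assumes +1 win, -1 loss, 0 tie.
PAYOFF = {
    "Rock":     {"Rock": 0,  "Scissors": 1,  "Paper": -1},
    "Scissors": {"Rock": -1, "Scissors": 0,  "Paper": 1},
    "Paper":    {"Rock": 1,  "Scissors": -1, "Paper": 0},
}

def best_responses(p):
    """Pure moves maximising player two's expected payoff when player one
    plays Rock with probability p and Scissors with probability 1 - p."""
    one = {"Rock": p, "Scissors": 1 - p, "Paper": 0}
    expected = {m: sum(PAYOFF[m][k] * one[k] for k in one) for m in PAYOFF}
    best = max(expected.values())
    return [m for m in PAYOFF if expected[m] == best]

for p in (Fraction(1, 2), Fraction(2, 3), Fraction(9, 10)):
    print(p, best_responses(p))
```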

3.II.23G

Consider the following linear programming problem:

Write down the Phase One problem in this case, and solve it.

By using the solution of the Phase One problem as an initial basic feasible solution for the Phase Two simplex algorithm, solve the above maximization problem. That is, find the optimal tableau and read the optimal solution and optimal value from it.

4.I.10G

State and prove the max flow/min cut theorem. In your answer you should define clearly the following terms: flow; maximal flow; cut; capacity.
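The constructive proof of max flow/min cut can be run as an algorithm. It repeatedly augments along paths in the residual network until none remains. The vertices still reachable from the source then form the source side of a cut whose capacity equals the flow value.

The sketch below uses breadth-first augmenting paths (the Edmonds-Karp variant of Ford-Fulkerson) on a hypothetical network.

```python
from collections import deque

def max_flow_min_cut(capacity, s, t):
    """Edmonds-Karp: augment along shortest paths in the residual network.

    capacity: dict mapping u -> {v: c(u, v)} with non-negative capacities.
    Returns (value of a maximal flow, source side S of a minimal cut).
    """
    residual = {u: dict(edges) for u, edges in capacity.items()}
    for u, edges in capacity.items():
        for v in edges:
            residual.setdefault(v, {}).setdefault(u, 0)   # reverse edge
    residual.setdefault(s, {})
    value = 0
    while True:
        parent = {s: None}
        queue = deque([s])
        while queue and t not in parent:
            u = queue.popleft()
            for v, c in residual[u].items():
                if c > 0 and v not in parent:
                    parent[v] = u
                    queue.append(v)
        if t not in parent:
            # No augmenting path: parent holds every vertex reachable from s.
            return value, set(parent)
        path, v = [], t
        while parent[v] is not None:
            path.append((parent[v], v))
            v = parent[v]
        push = min(residual[u][v] for u, v in path)   # bottleneck capacity
        for u, v in path:
            residual[u][v] -= push
            residual[v][u] += push
        value += push

network = {                      # hypothetical network
    "s": {"a": 3, "b": 2},
    "a": {"b": 1, "t": 2},
    "b": {"t": 3},
}
value, S = max_flow_min_cut(network, "s", "t")
cut_capacity = sum(c for u, edges in network.items() if u in S
                   for v, c in edges.items() if v not in S)
print(value, S, cut_capacity)
```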

4.II.20G

For any number , find the minimum and maximum values of

subject to . Find all the points at which the minimum and maximum are attained. Justify your answer.

1.I.8D

From the time-dependent Schrödinger equation for , derive the equation

for and some suitable .

Show that is a solution of the time-dependent Schrödinger equation with zero potential for suitable and calculate and . What is the interpretation of this solution?

1.II.19D

The angular momentum operators are . Write down their commutation relations and show that . Let

and show that

Verify that , where , for any function . Show that

for any integer . Show that is an eigenfunction of and determine its eigenvalue. Why must be an eigenfunction of ? What is its eigenvalue?

2.I.8D

A quantum mechanical system is described by vectors . The energy eigenvectors are

with energies respectively. The system is in the state at time . What is the probability of finding it in the state at a later time ?

2.II.19D

Consider a Hamiltonian of the form

where is a real function. Show that this can be written in the form , for some real to be determined. Show that there is a wave function , satisfying a first-order equation, such that . If is a polynomial of degree , show that must be odd in order for to be normalisable. By considering show that all energy eigenvalues other than that for must be positive.

For , use these results to find the lowest energy and corresponding wave function for the harmonic oscillator Hamiltonian

3.I

Write down the expressions for the classical energy and angular momentum for an electron in a hydrogen atom. In the Bohr model the angular momentum is quantised so that

for integer . Assuming circular orbits, show that the radius of the 'th orbit is

and determine . Show that the corresponding energy is then

3.II.20D

A one-dimensional system has the potential

For energy , the wave function has the form

By considering the relation between incoming and outgoing waves explain why we should expect

Find four linear relations between . Eliminate and show that

where and . By using the result for , or otherwise, explain why the solution for is

For define the transmission coefficient and show that, for large ,

3.I

Write down the Lorentz transformation with one space dimension between two inertial frames and moving relative to one another at speed .

A particle moves at velocity in frame . Find its velocity in frame and show that is always less than .

4.I.7D

For a particle with energy and momentum , explain why an observer moving in the -direction with velocity would find

where . What is the relation between and for a photon? Show that the same relation holds for and and that

What happens for ?

4.II.17D

State how the 4-momentum of a particle is related to its energy and 3-momentum. How is related to the particle mass? For two particles with 4-momenta and find a Lorentz-invariant expression that gives the total energy in their centre of mass frame.

A photon strikes an electron at rest. What is the minimum energy it must have in order for it to create an electron and positron, of the same mass as the electron, in addition to the original electron? Express the result in units of .

[It may be helpful to consider the minimum necessary energy in the centre of mass frame.]
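At threshold all products are at rest in the centre of mass frame. The invariant s = (p_beam + p_target)² must therefore equal (Σ m_final)². For a beam of energy E on a stationary target, with c = 1,

s = m_beam² + m_target² + 2E·m_target.

The sketch below solves this for E. It is shown for a photon on an electron producing three particles of the electron mass, with energies measured in units of m_e c².

```python
def threshold_energy(m_beam, m_target, final_masses):
    """Minimum beam energy (units with c = 1) for beam + stationary target -> final_masses,
    from m_beam**2 + m_target**2 + 2 E m_target = (sum of final masses)**2."""
    M = sum(final_masses)
    return (M ** 2 - m_beam ** 2 - m_target ** 2) / (2 * m_target)

m_e = 1.0   # measure energies in units of m_e c^2
print(threshold_energy(0.0, m_e, [m_e, m_e, m_e]))
```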

1.I.10H

Use the generalized likelihood-ratio test to derive Student's -test for the equality of the means of two populations. You should explain carefully the assumptions underlying the test.

1.II.21H

State and prove the Rao-Blackwell Theorem.

Suppose that are independent, identically-distributed random variables with distribution

where , is an unknown parameter. Determine a one-dimensional sufficient statistic, , for .

By first finding a simple unbiased estimate for , or otherwise, determine an unbiased estimate for which is a function of .

2.I.10H

A study of 60 men and 90 women classified each individual according to eye colour to produce the figures below.

\begin{tabular}{|c|c|c|c|}
\cline{2-4}
\multicolumn{1}{c|}{} & Blue & Brown & Green \\
\hline
Men & 20 & 20 & 20 \\
\hline
Women & 20 & 50 & 20 \\
\hline
\end{tabular}

Explain how you would analyse these results. You should indicate carefully any underlying assumptions that you are making.

A further study took 150 individuals and classified them both by eye colour and by whether they were left or right handed to produce the following table.

\begin{tabular}{|c|c|c|c|}
\cline{2-4}
\multicolumn{1}{c|}{} & Blue & Brown & Green \\
\hline
Left Handed & 20 & 20 & 20 \\
\hline
Right Handed & 20 & 50 & 20 \\
\hline
\end{tabular}

How would your analysis change? You should again set out your underlying assumptions carefully.

[You may wish to note the following percentiles of the distribution.
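Both tables contain the same counts, so Pearson's statistic Σ(O - E)²/E, with E = row total × column total / grand total, takes the same value for each. What changes is the sampling model:
- In the first study the row totals (60 men, 90 women) are fixed, so the statistic tests homogeneity of the eye-colour distributions.
- In the second study only the total of 150 is fixed, so it tests independence of eye colour and handedness.

In both cases the statistic is referred to χ² with (r - 1)(c - 1) degrees of freedom. The sketch below computes the statistic and its degrees of freedom from the counts in the tables.

```python
def chi_squared(table):
    """Pearson's statistic sum (O - E)^2 / E for a two-way table of counts,
    with E = row total * column total / grand total, and its degrees of freedom."""
    rows = [sum(r) for r in table]
    cols = [sum(c) for c in zip(*table)]
    n = sum(rows)
    stat = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            expected = rows[i] * cols[j] / n
            stat += (observed - expected) ** 2 / expected
    df = (len(rows) - 1) * (len(cols) - 1)
    return stat, df

# Counts from the tables (columns: Blue, Brown, Green).
table = [[20, 20, 20],
         [20, 50, 20]]
print(chi_squared(table))
```

The value should then be compared with the upper percentile of the χ² distribution on 2 degrees of freedom.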

2.II.21H

Defining carefully the terminology that you use, state and prove the Neyman-Pearson Lemma.

Let be a single observation from the distribution with density function

for an unknown real parameter . Find the best test of size , of the hypothesis against , where .

When , for which values of and will the power of the best test be at least ?

4.I

Suppose that are independent random variables, with having the normal distribution with mean and variance ; here are unknown and are known constants.

Derive the least-squares estimate of .

Explain carefully how to test the hypothesis against .

4.II.19H

It is required to estimate the unknown parameter after observing , a single random variable with probability density function ; the parameter has the prior distribution with density and the loss function is . Show that the optimal Bayesian point estimate minimizes the posterior expected loss.

Suppose now that and , where is known. Determine the posterior distribution of given .

Determine the optimal Bayesian point estimate of in the cases when

(i) , and

(ii) .