Part IB, 2021, Paper 1

Paper 1, Section II, F

Let $f : X \to Y$ be a map between metric spaces. Prove that the following two statements are equivalent:

(i) $f^{-1}(A) \subset X$ is open whenever $A \subset Y$ is open.

(ii) $f(x_n) \to f(a)$ for any sequence $x_n \to a$.

For $f : X \to Y$ as above, determine which of the following statements are always true and which may be false, giving a proof or a counterexample as appropriate.

(a) If is compact and is continuous, then is uniformly continuous.

(b) If is compact and is continuous, then is compact.

(c) If is connected, is continuous and is dense in , then is connected.

(d) If the set is closed in and is compact, then is continuous.

Paper 1, Section I, B

Let , and let denote the positively oriented circle of radius centred at the origin. Define

Evaluate for .

Paper 1, Section II, G

(a) State a theorem establishing Laurent series of analytic functions on suitable domains. Give a formula for the Laurent coefficient.

Define the notion of isolated singularity. State the classification of an isolated singularity in terms of Laurent coefficients.

Compute the Laurent series of

on the annuli and . Using this example, comment on the statement that Laurent coefficients are unique. Classify the singularity of at 0 .

(b) Let be an open subset of the complex plane, let and let . Assume that is an analytic function on with as . By considering the Laurent series of at , classify the singularity of at in terms of the Laurent coefficients. [You may assume that a continuous function on that is analytic on is analytic on .]

Now let be an entire function with as . By considering Laurent series at 0 of and of , show that is a polynomial.

(c) Classify, giving reasons, the singularity at the origin of each of the following functions and in each case compute the residue:

Paper 1, Section II, 15D

(a) Show that the magnetic flux $\Phi$ passing through a simple, closed curve $C$ can be written as

$$\Phi = \oint_C \mathbf{A} \cdot d\mathbf{x},$$

where $\mathbf{A}$ is the magnetic vector potential. Explain why this integral is independent of the choice of gauge.

(b) Show that the magnetic vector potential due to a static electric current density $\mathbf{J}$, in the Coulomb gauge, satisfies Poisson's equation

$$\nabla^2 \mathbf{A} = -\mu_0 \mathbf{J}.$$

Hence obtain an expression for the magnetic vector potential due to a static, thin wire, in the form of a simple, closed curve $C$, that carries an electric current $I$. [You may assume that the electric current density of the wire can be written as

$$\mathbf{J}(\mathbf{x}) = I \oint_C \delta^{(3)}(\mathbf{x} - \mathbf{x}')\, d\mathbf{x}',$$

where $\delta^{(3)}$ is the three-dimensional Dirac delta function.]

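(c) Consider two thin wires, in the form of simple, closed curves $C_1$ and $C_2$, that carry electric currents $I_1$ and $I_2$, respectively. Let $\Phi_{ij}$ (where $i, j \in \{1, 2\}$) be the magnetic flux passing through the curve $C_i$ due to the current $I_j$ flowing around $C_j$. The inductances are defined by $L_{ij} = \Phi_{ij} / I_j$. By combining the results of parts (a) and (b), or otherwise, derive Neumann's formula for the mutual inductance,

$$L_{12} = L_{21} = \frac{\mu_0}{4\pi} \oint_{C_1} \oint_{C_2} \frac{d\mathbf{x}_1 \cdot d\mathbf{x}_2}{|\mathbf{x}_1 - \mathbf{x}_2|}.$$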

Suppose that is a circular loop of radius , centred at and lying in the plane , and that is a different circular loop of radius , centred at and lying in the plane . Show that the mutual inductance of the two loops is

where

and the function is defined, for , by the integral
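
As a rough numerical illustration of Neumann's formula, the sketch below evaluates the double line integral for two circular loops. It assumes, purely for illustration, that the loops are coaxial with radii `a` and `b` and axial separation `c`; these names, the function `mutual_inductance_coaxial`, and the choice of quadrature are hypothetical and not part of the question. For coaxial loops the integrand depends only on the angle difference, so one of the two angular integrals contributes a factor of $2\pi$.

```python
import math

MU0 = 4e-7 * math.pi  # vacuum permeability in SI units (classical value)


def mutual_inductance_coaxial(a, b, c, n=2000):
    """Approximate Neumann's formula for two coaxial circular loops.

    Assumed geometry (illustrative only):
      loop 1: x1 = (a cos t1, a sin t1, 0)
      loop 2: x2 = (b cos t2, b sin t2, c)
    Then dx1 . dx2 = a b cos(t1 - t2) dt1 dt2 and
    |x1 - x2|^2 = a^2 + b^2 + c^2 - 2 a b cos(t1 - t2).
    The integrand is singular if a == b and c == 0 (the loops coincide).
    """
    h = 2.0 * math.pi / n
    total = 0.0
    # Periodic trapezoidal rule in the angle difference phi = t1 - t2.
    for k in range(n):
        phi = k * h
        dist = math.sqrt(a * a + b * b + c * c - 2.0 * a * b * math.cos(phi))
        total += math.cos(phi) / dist
    # The remaining angular integral contributes a factor 2*pi.
    return MU0 / (4.0 * math.pi) * 2.0 * math.pi * a * b * total * h


if __name__ == "__main__":
    m = mutual_inductance_coaxial(1.0, 1.0, 50.0)
    # For widely separated loops the result should approach the
    # magnetic-dipole estimate mu0 * pi * a^2 * b^2 / (2 c^3).
    dipole = MU0 * math.pi / (2.0 * 50.0 ** 3)
    print(m, dipole)
```

The comparison with the dipole estimate is only a sanity check for large separation; it does not replace the closed-form answer the question asks for.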

Paper 1, Section II, A

A two-dimensional flow is given by a velocity potential

where is a constant.

(a) Find the corresponding velocity field . Determine .

(b) The time-average of a quantity is defined as

Show that the time-average of this velocity field is zero everywhere. Write down an expression for the acceleration of fluid particles, and find the time-average of this expression at a fixed point .

(c) Now assume that . The material particle at at is marked with dye. Write down equations for its subsequent motion. Verify that its position for is given (correct to terms of order ) by

Deduce the time-average velocity of the dyed particle correct to this order.

Paper 1, Section I, F

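Let $f : \mathbb{R}^3 \to \mathbb{R}$ be a smooth function and let $\Sigma = f^{-1}(0)$ (assumed not empty). Show that if the differential $Df_p \neq 0$ for all $p \in \Sigma$, then $\Sigma$ is a smooth surface in $\mathbb{R}^3$.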

Is a smooth surface? Is every surface of the form for some smooth ? Justify your answers.

Paper 1, Section II, F

Let be an oriented surface. Define the Gauss map and show that the differential of the Gauss map at any point is a self-adjoint linear map. Define the Gauss curvature and compute in a given parametrisation.

A point is called umbilic if has a repeated eigenvalue. Let be a surface such that every point is umbilic and there is a parametrisation such that . Prove that is part of a plane or part of a sphere. Hint: consider the symmetry of the mixed partial derivatives , where for

Paper 1, Section II, G

Show that a ring $R$ is Noetherian if and only if every ideal of $R$ is finitely generated. Show that if $\phi : R \to S$ is a surjective ring homomorphism and $R$ is Noetherian, then $S$ is Noetherian.

State and prove Hilbert's Basis Theorem.

Let . Is Noetherian? Justify your answer.

Give, with proof, an example of a Unique Factorization Domain that is not Noetherian.

Let be the ring of continuous functions . Is Noetherian? Justify your answer.

Paper 1, Section I,

Let be a vector space over , and let , symmetric bilinear form on .

Let . Show that is of dimension and . Show that if is a subspace with , then the restriction of , is nondegenerate.

Conclude that the dimension of is even.

Paper 1, Section II, E

Let , and let .

(a) (i) Compute , for all .

(ii) Hence, or otherwise, compute , for all .

(b) Let be a finite-dimensional vector space over , and let . Suppose for some .

(i) Determine the possible eigenvalues of .

(ii) What are the possible Jordan blocks of ?

(iii) Show that if , there exists a decomposition

where , and .

Paper 1, Section II, 19H

Let $(X_n)_{n \geqslant 0}$ be a Markov chain with transition matrix $P$. What is a stopping time of $(X_n)_{n \geqslant 0}$? What is the strong Markov property?

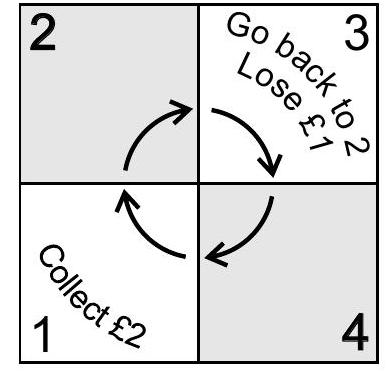

The exciting game of 'Unopoly' is played by a single player on a board of 4 squares. The player starts with (where ). During each turn, the player tosses a fair coin and moves one or two places in a clockwise direction according to whether the coin lands heads or tails respectively. The player collects each time they pass (or land on) square 1. If the player lands on square 3 however, they immediately lose and go back to square 2. The game continues indefinitely unless the player is on square 2 with , in which case the player loses the game and the game ends.

(a) By setting up an appropriate Markov chain, show that if the player is at square 2 with , where , the probability that they are ever at square 2 with is

(b) Find the probability of losing the game when the player starts on square 1 with , where .

[Hint: Take the state space of your Markov chain to be .]

Paper 1, Section II, C

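(a) By introducing the variables $\xi = x + ct$ and $\eta = x - ct$ (where $c$ is a constant), derive d'Alembert's solution of the initial value problem for the wave equation:

$$u_{tt} - c^2 u_{xx} = 0, \quad u(x, 0) = \phi(x), \quad u_t(x, 0) = \psi(x),$$

where $-\infty < x < \infty$, $t \geqslant 0$ and $\phi$ and $\psi$ are given functions (and subscripts denote partial derivatives).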
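
A numerical sanity check of d'Alembert's formula can be sketched as follows. It assumes the standard form of the problem, $u_{tt} = c^2 u_{xx}$ with $u(x,0) = \phi(x)$ and $u_t(x,0) = \psi(x)$, and the standard solution $u = \tfrac12[\phi(x-ct) + \phi(x+ct)] + \tfrac{1}{2c}\int_{x-ct}^{x+ct}\psi(s)\,ds$. The function names, the sample data `phi` and `psi`, and the value of `c` are hypothetical choices made only for this illustration.

```python
import math


def simpson(g, a, b, n=200):
    """Composite Simpson approximation of the integral of g over [a, b] (n even)."""
    h = (b - a) / n
    total = g(a) + g(b)
    for i in range(1, n):
        total += (4 if i % 2 else 2) * g(a + i * h)
    return total * h / 3.0


def dalembert(phi, psi, c, x, t):
    """Evaluate d'Alembert's formula at (x, t) for initial data phi, psi."""
    travelling = 0.5 * (phi(x - c * t) + phi(x + c * t))
    return travelling + simpson(psi, x - c * t, x + c * t) / (2.0 * c)


def wave_residual(u, c, x, t, h=1e-3):
    """Central-difference estimate of u_tt - c^2 u_xx at (x, t)."""
    u_tt = (u(x, t + h) - 2.0 * u(x, t) + u(x, t - h)) / h ** 2
    u_xx = (u(x + h, t) - 2.0 * u(x, t) + u(x - h, t)) / h ** 2
    return u_tt - c ** 2 * u_xx


# Hypothetical smooth initial data, chosen only for the check.
def phi(x):
    return math.exp(-x * x)


psi = math.cos
c = 2.0


def u(x, t):
    return dalembert(phi, psi, c, x, t)


if __name__ == "__main__":
    print("residual of wave equation:", wave_residual(u, c, 0.3, 0.7))
    print("u(x, 0) - phi(x):", u(0.3, 0.0) - phi(0.3))
```

The residual is not exactly zero because of finite-difference and quadrature error; the check only confirms that it is small compared with the individual second derivatives.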

(b) Consider the forced wave equation with homogeneous initial conditions:

where and is a given function. You may assume that the solution is given by

For the forced wave equation , now in the half space (and with as before), find (in terms of ) the solution for that satisfies the (inhomogeneous) initial conditions

and the boundary condition for .

Paper 1, Section I, B

Prove, from first principles, that there is an algorithm that can determine whether any real symmetric $n \times n$ matrix $A$ is positive definite or not, with the computational cost (number of arithmetic operations) bounded by $\mathcal{O}(n^3)$.

[Hint: Consider the LDL decomposition.]
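
One way to make the hint concrete is the sketch below, which attempts a factorisation $A = LDL^T$ with $L$ unit lower triangular and $D$ diagonal, and reports positive definiteness exactly when every pivot $d_k$ is strictly positive. It is an illustration in floating-point arithmetic, not the proof the question asks for; the function name `is_positive_definite` and the list-of-rows matrix representation are assumptions made here.

```python
def is_positive_definite(a):
    """Return True if the real symmetric matrix `a` (a list of rows) is
    positive definite, by attempting an LDL^T factorisation.

    Three nested loops over at most n indices give O(n^3) arithmetic operations.
    Symmetry of `a` is assumed, not checked; only the lower triangle is read.
    """
    n = len(a)
    # lower[i][k] stores the strictly lower-triangular part of L
    # (the unit diagonal is implied).
    lower = [[0.0] * n for _ in range(n)]
    d = [0.0] * n
    for k in range(n):
        # Pivot: d_k = a_kk - sum_{j<k} L_kj^2 d_j.
        d[k] = a[k][k] - sum(lower[k][j] ** 2 * d[j] for j in range(k))
        if d[k] <= 0.0:
            # A non-positive pivot rules out positive definiteness.
            return False
        for i in range(k + 1, n):
            s = sum(lower[i][j] * lower[k][j] * d[j] for j in range(k))
            lower[i][k] = (a[i][k] - s) / d[k]
    return True


if __name__ == "__main__":
    print(is_positive_definite([[2, -1, 0], [-1, 2, -1], [0, -1, 2]]))
    print(is_positive_definite([[1, 2], [2, 1]]))
```

Stopping at the first non-positive pivot avoids any division by zero, so the routine never needs pivoting for the yes/no question it answers.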

Paper 1, Section II, B

For the ordinary differential equation

where and the function is analytic, consider an explicit one-step method described as the mapping

Here and with time step , producing numerical approximations to the exact solution of equation , with being the initial value of the numerical solution.

(i) Define the local error of a one-step method.

(ii) Let be a norm on and suppose that

for all , where is some positive constant. Let be given and denote the initial error (potentially non-zero). Show that if the local error of the one-step method ( ) is , then

(iii) Let and consider equation where is time-independent satisfying for all , where is a positive constant. Consider the one-step method given by

Use part (ii) to show that for this method we have that equation (††) holds (with a potentially different constant ) for .

Paper 1, Section I,

(a) Let be a convex function for each . Show that

are both convex functions.

(b) Fix . Show that if is convex, then given by is convex.

(c) Fix vectors . Let be given by

Show that is convex. [You may use any result from the course provided you state it.]

Paper 1, Section II, C

Consider a quantum mechanical particle of mass in a one-dimensional stepped potential well given by:

where and are constants.

(i) Show that all energy levels of the particle are non-negative. Show that any level with satisfies

where

(ii) Suppose that initially and the particle is in the ground state of the potential well. is then changed to a value (while the particle's wavefunction stays the same) and the energy of the particle is measured. For , give an expression in terms of for prob , the probability that the energy measurement will find the particle having energy . The expression may be left in terms of integrals that you need not evaluate.

Paper 1, Section I, H

Let be i.i.d. Bernoulli random variables, where and is unknown.

(a) What does it mean for a statistic to be sufficient for ? Find such a sufficient statistic .

(b) State and prove the Rao-Blackwell theorem.

(c) By considering the estimator of , find an unbiased estimator of that is a function of the statistic found in part (a), and has variance strictly smaller than that of .

Paper 1, Section II, H

(a) Show that if are independent random variables with common distribution, then . [Hint: If then if and otherwise.]

(b) Show that if then .

(c) State the Neyman-Pearson lemma.

(d) Let be independent random variables with common density proportional to for . Find a most powerful test of size of against , giving the critical region in terms of a quantile of an appropriate gamma distribution. Find a uniformly most powerful test of size of against .

Paper 1, Section I, D

Let be a bounded region of , with boundary . Let be a smooth function defined on , subject to the boundary condition that on and the normalization condition that

Let be the functional

Show that has a stationary value, subject to the stated boundary and normalization conditions, when satisfies a partial differential equation of the form

in , where is a constant.

Determine how is related to the stationary value of the functional . [Hint: Consider .]