Part IB, 2003, Paper 2


2.I.1F

Explain what it means for a function to be differentiable at a point . Show that if the partial derivatives and exist in a neighbourhood of and are continuous at then is differentiable at .

Let

and . Do the partial derivatives of exist at ? Is differentiable at ? Justify your answers.

2.II.10F

Let be the space of real matrices. Show that the function

(where denotes the usual Euclidean norm on ) defines a norm on . Show also that this norm satisfies for all and , and that if then all entries of have absolute value less than . Deduce that any function such that is a polynomial in the entries of is continuously differentiable.

Now let be the mapping sending a matrix to its determinant. By considering as a polynomial in the entries of , show that the derivative is the function . Deduce that, for any is the mapping , where is the adjugate of , i.e. the matrix of its cofactors.

[Hint: consider first the case when is invertible. You may assume the results that the set of invertible matrices is open in and that its closure is the whole of , and the identity .]
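
The formulas in this question did not survive extraction, so the following is only a numerical sanity check under a stated assumption: that the derivative of the determinant at a matrix A, applied to a matrix H, equals the trace of adj(A) H, with adj(A) the transpose of the cofactor matrix. The 3 x 3 matrices `A` and `H` and all function names are illustrative choices, not part of the paper. The sketch compares a central finite difference of the determinant with the trace formula.

```python
# Finite-difference check on 3x3 matrices.
# Assumption (not recoverable from the extracted text):
#   D det(A)(H) = tr(adj(A) H), with adj(A) the transpose of the cofactor matrix.

def det3(m):
    """Determinant of a 3x3 matrix given as a list of rows."""
    (a, b, c), (d, e, f), (g, h, i) = m
    return a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)

def minor(m, r, c):
    """2x2 determinant obtained by deleting row r and column c."""
    rows = [row[:c] + row[c + 1:] for k, row in enumerate(m) if k != r]
    return rows[0][0] * rows[1][1] - rows[0][1] * rows[1][0]

def adjugate(m):
    """Transpose of the cofactor matrix: adj[j][i] = (-1)^(i+j) * minor(i, j)."""
    adj = [[0.0] * 3 for _ in range(3)]
    for i in range(3):
        for j in range(3):
            adj[j][i] = (-1) ** (i + j) * minor(m, i, j)
    return adj

def trace_of_product(p, q):
    """tr(P Q) without forming the product."""
    return sum(p[i][k] * q[k][i] for i in range(3) for k in range(3))

def directional_derivative(m, h, eps=1e-6):
    """Central difference (det(A + eps H) - det(A - eps H)) / (2 eps)."""
    plus = [[m[i][j] + eps * h[i][j] for j in range(3)] for i in range(3)]
    minus = [[m[i][j] - eps * h[i][j] for j in range(3)] for i in range(3)]
    return (det3(plus) - det3(minus)) / (2 * eps)

# Illustrative matrices (hypothetical, not from the exam).
A = [[2.0, 1.0, 0.0], [1.0, 3.0, 1.0], [0.0, 1.0, 4.0]]
H = [[0.5, -1.0, 2.0], [1.5, 0.0, -0.5], [1.0, 2.0, 1.0]]

numeric = directional_derivative(A, H)
formula = trace_of_product(adjugate(A), H)
print(numeric, formula)
```

Agreement of the two printed numbers is evidence for the formula at one point only; the question asks for a proof valid for every matrix, including singular ones.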

2.I.7B

(a) Using the residue theorem, evaluate

(b) Deduce that

2.II.16B

(a) Show that if satisfies the equation

where is a constant, then its Fourier transform satisfies the same equation, i.e.

(b) Prove that, for each , there is a polynomial of degree , unique up to multiplication by a constant, such that

is a solution of for some .

(c) Using the fact that satisfies for some constant , show that the Fourier transform of has the form

where is also a polynomial of degree .

(d) Deduce that the are eigenfunctions of the Fourier transform operator, i.e. for some constants

2.II.13E

(a) Let be defined by and let be the image of . Prove that is compact and path-connected. [Hint: you may find it helpful to set

(b) Let be defined by , let be the image of and let be the closed unit . Prove that is connected. Explain briefly why it is not path-connected.

2.I.6E

Let be distinct real numbers. For each let be the vector . Let be the matrix with rows and let be a column vector of size . Prove that if and only if . Deduce that the vectors .

[You may use general facts about matrices if you state them clearly.]

2.II.15E

(a) Let be an matrix and for each let be the matrix formed by the first columns of . Suppose that . Explain why the nullity of is non-zero. Prove that if is minimal such that has non-zero nullity, then the nullity of is 1.

(b) Suppose that no column of consists entirely of zeros. Deduce from (a) that there exist scalars (where is defined as in (a)) such that for every , but whenever are distinct real numbers there is some such that .

(c) Now let and be bases for the same real dimensional vector space. Let be distinct real numbers such that for every the vectors are linearly dependent. For each , let be scalars, not all zero, such that . By applying the result of (b) to the matrix , deduce that .

(d) It follows that the vectors are linearly dependent for at most values of . Explain briefly how this result can also be proved using determinants.

2.I.2C

Explain briefly why the second-rank tensor

is isotropic, where is the surface of the unit sphere centred on the origin.

A second-rank tensor is defined by

where is the surface of the unit sphere centred on the origin. Calculate in the form

where and are to be determined.

By considering the action of on and on vectors perpendicular to , determine the eigenvalues and associated eigenvectors of .

2.II.11C

State the transformation law for an th-rank tensor .

Show that the fourth-rank tensor

is isotropic for arbitrary scalars and .

The stress and strain in a linear elastic medium are related by

Given that is symmetric and that the medium is isotropic, show that the stress-strain relationship can be written in the form

Show that can be written in the form , where is a traceless tensor and is a scalar to be determined. Show also that necessary and sufficient conditions for the stored elastic energy density to be non-negative for any deformation of the solid are that

2.I.5B

Let

Find the LU factorization of the matrix and use it to solve the system .
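
The matrix and right-hand side for this question were lost in extraction, so the sketch below runs on a hypothetical 3 x 3 system chosen purely for illustration. It shows one common convention, Doolittle factorization without pivoting (unit diagonal on the lower factor), followed by forward and back substitution. The function names are assumptions, and the method presumes every pivot is non-zero.

```python
def lu_factorize(a):
    """Doolittle LU without pivoting: A = L U with unit-diagonal L.

    Assumes every pivot encountered is non-zero.
    """
    n = len(a)
    lower = [[float(i == j) for j in range(n)] for i in range(n)]
    upper = [[float(x) for x in row] for row in a]
    for k in range(n):
        for i in range(k + 1, n):
            factor = upper[i][k] / upper[k][k]
            lower[i][k] = factor  # multiplier used to eliminate entry (i, k)
            for j in range(k, n):
                upper[i][j] -= factor * upper[k][j]
    return lower, upper

def solve_lu(lower, upper, b):
    """Solve L y = b by forward substitution, then U x = y by back substitution."""
    n = len(b)
    y = [0.0] * n
    for i in range(n):
        y[i] = b[i] - sum(lower[i][j] * y[j] for j in range(i))
    x = [0.0] * n
    for i in reversed(range(n)):
        s = sum(upper[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (y[i] - s) / upper[i][i]
    return x

# Hypothetical data, NOT the matrix from the exam question.
A = [[2, 1, 1], [4, 3, 3], [8, 7, 9]]
b = [4, 10, 24]

L, U = lu_factorize(A)
x = solve_lu(L, U, b)
print(L, U, x)
```

Once the factors are known, each new right-hand side costs only the two triangular solves, which is the usual reason for factorizing rather than eliminating afresh.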

2.II.14B

Let

be an approximation of the second derivative which is exact for , the set of polynomials of degree , and let

be its error.

(a) Determine the coefficients .

(b) Using the Peano kernel theorem, prove that, for , the set of three times continuously differentiable functions, the error satisfies the inequality

2.I.8G

Let be a finite-dimensional real vector space and a positive definite symmetric bilinear form on . Let be a linear map such that for all and in . Prove that if is invertible, then the dimension of must be even. By considering the restriction of to its image or otherwise, prove that the rank of is always even.

2.II.17G

Let be the set of all complex matrices which are hermitian, that is, , where .

(a) Show that is a real 4-dimensional vector space. Consider the real symmetric bilinear form on this space defined by

Prove that and , where denotes the identity matrix.

(b) Consider the three matrices

Prove that the basis of diagonalizes . Hence or otherwise find the rank and signature of .

(c) Let be the set of all complex matrices which satisfy . Show that is a real 4-dimensional vector space. Given , put

Show that takes values in and is a linear isomorphism between and .

(d) Define a real symmetric bilinear form on by setting , . Show that for all . Find the rank and signature of the symmetric bilinear form defined on .

2.I.9A

What is meant by the statement that an operator is hermitian?

A particle of mass moves in the real potential in one dimension. Show that the Hamiltonian of the system is hermitian.

Show that

where is the momentum operator and denotes the expectation value of the operator .

2.II.18A

A particle of mass and energy moving in one dimension is incident from the left on a potential barrier given by

with .

In the limit with held fixed, show that the transmission probability is

2.I.3H

Let be a random sample from the distribution, and suppose that the prior distribution for is , where are known. Determine the posterior distribution for , given , and the best point estimate of under both quadratic and absolute error loss.

2.II.12H

An examination was given to 500 high-school students in each of two large cities, and their grades were recorded as low, medium, or high. The results are given in the table below.

\begin{tabular}{l|ccc}
 & Low & Medium & High \\
\hline
City A & 103 & 145 & 252 \\
City B & 140 & 136 & 224
\end{tabular}

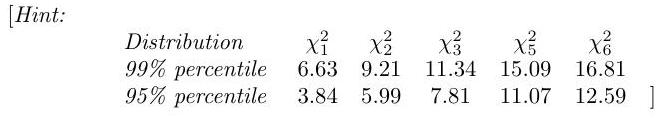

Derive carefully the test of homogeneity and test the hypothesis that the distributions of scores among students in the two cities are the same.
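
As a check on the arithmetic only (the derivation is what the question asks for), the following sketch computes Pearson's chi-squared statistic for the table above, assuming the usual large-sample approximation to the homogeneity test. Expected counts are row total times column total divided by the grand total. The function name and the dictionary layout are illustrative choices.

```python
import math

# Observed counts from the table (Low, Medium, High).
observed = {"City A": [103, 145, 252], "City B": [140, 136, 224]}

def homogeneity_statistic(table):
    """Pearson chi-squared statistic and degrees of freedom for an r x c table."""
    rows = list(table.values())
    row_totals = [sum(r) for r in rows]
    col_totals = [sum(c) for c in zip(*rows)]
    grand = sum(row_totals)
    stat = 0.0
    for r, row in enumerate(rows):
        for c, obs in enumerate(row):
            expected = row_totals[r] * col_totals[c] / grand
            stat += (obs - expected) ** 2 / expected
    dof = (len(rows) - 1) * (len(col_totals) - 1)
    return stat, dof

stat, dof = homogeneity_statistic(observed)

# For exactly 2 degrees of freedom the chi-squared upper tail is exp(-x / 2);
# this shortcut is not valid for any other number of degrees of freedom.
p_value = math.exp(-stat / 2)

print(round(stat, 3), dof, round(p_value, 4))
```

The statistic comes out at about 7.57 on 2 degrees of freedom, which exceeds the 5% critical value of 5.99 but not the 1% value of 9.21, so under this approximation the hypothesis of equal distributions would be rejected at the 5% level but not at the 1% level.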