Part IB, 2019, Paper 4

Paper 4, Section I, E

Let . What does it mean to say that a sequence of real-valued functions on is uniformly convergent?

(i) If a sequence of real-valued functions on converges uniformly to , and each is continuous, must also be continuous?

(ii) Let . Does the sequence converge uniformly on ?

(iii) If a sequence of real-valued functions on converges uniformly to , and each is differentiable, must also be differentiable?

Give a proof or counterexample in each case.

Paper 4, Section II, E

(a) (i) Show that a compact metric space must be complete.

(ii) If a metric space is complete and bounded, must it be compact? Give a proof or counterexample.

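(b) A metric space $(X, d)$ is said to be totally bounded if for all $\epsilon > 0$, there exists $N \in \mathbb{N}$ and $x_1, \ldots, x_N \in X$ such that

$$X = \bigcup_{i=1}^{N} B_\epsilon(x_i).$$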

(i) Show that a compact metric space is totally bounded.

(ii) Show that a complete, totally bounded metric space is compact.

[Hint: If is Cauchy, then there is a subsequence such that

(iii) Consider the space of continuous functions , with the metric

Is this space compact? Justify your answer.

Paper 4, Section I,

State the Cauchy Integral Formula for a disc. If is a holomorphic function such that for all , show using the Cauchy Integral Formula that is constant.

Paper 4, Section II, D

(a) Using the Bromwich contour integral, find the inverse Laplace transform of .

The temperature of mercury in a spherical thermometer bulb obeys the radial heat equation

with unit diffusion constant. At the mercury is at a uniform temperature equal to that of the surrounding air. For the surrounding air temperature lowers such that at the edge of the thermometer bulb

where is a constant.

(b) Find an explicit expression for .

(c) Show that the temperature of the mercury at the centre of the thermometer bulb at late times is

[You may assume that the late time behaviour of is determined by the singular part of at

Paper 4, Section I, A

Write down Maxwell's Equations for electric and magnetic fields and in the absence of charges and currents. Show that there are solutions of the form

if and satisfy a constraint and if and are then chosen appropriately.

Find the solution with , where is real, and . Compute the Poynting vector and state its physical significance.

Paper 4, Section II, C

The linear shallow-water equations governing the motion of a fluid layer in the neighbourhood of a point on the Earth's surface in the northern hemisphere are

where and are the horizontal velocity components and is the perturbation of the height of the free surface.

(a) Explain the meaning of the three positive constants and appearing in the equations above and outline the assumptions made in deriving these equations.

(b) Show that , the -component of vorticity, satisfies

and deduce that the potential vorticity

satisfies

(c) Consider a steady geostrophic flow that is uniform in the latitudinal direction. Show that

Given that the potential vorticity has the piecewise constant profile

where and are constants, and that as , solve for and in terms of the Rossby radius . Sketch the functions and in the case .

Paper 4, Section II, E

Let be the upper-half plane with hyperbolic metric . Define the group , and show that it acts by isometries on . [If you use a generation statement you must carefully state it.]

(a) Prove that acts transitively on the collection of pairs , where is a hyperbolic line in and .

(b) Let be the imaginary half-axis. Find the isometries of which fix pointwise. Hence or otherwise find all isometries of .

(c) Describe without proof the collection of all hyperbolic lines which meet with (signed) angle . Explain why there exists a hyperbolic triangle with angles and whenever .

(d) Is this triangle unique up to isometry? Justify your answer. [You may use without proof the fact that Möbius maps preserve angles.]

Paper 4, Section I, G

Let be a group and a subgroup.

(a) Define the normaliser .

(b) Suppose that and is a Sylow -subgroup of . Using Sylow's second theorem, prove that .

Paper 4, Section II, G

(a) Define the Smith Normal Form of a matrix. When is it guaranteed to exist?

(b) Deduce the classification of finitely generated abelian groups.

(c) How many conjugacy classes of matrices are there in with minimal polynomial

Paper 4, Section I, F

What is an eigenvalue of a matrix ? What is the eigenspace corresponding to an eigenvalue of ?

Consider the matrix

for a non-zero vector. Show that has rank 1. Find the eigenvalues of and describe the corresponding eigenspaces. Is diagonalisable?

Paper 4, Section II, F

If is a finite-dimensional real vector space with inner product , prove that the linear map given by is an isomorphism. [You do not need to show that it is linear.]

If and are inner product spaces and is a linear map, what is meant by the adjoint of ? If is an orthonormal basis for is an orthonormal basis for , and is the matrix representing in these bases, derive a formula for the matrix representing in these bases.

Prove that .

If then the linear equation has no solution, but we may instead search for a minimising , known as a least-squares solution. Show that is such a least-squares solution if and only if it satisfies . Hence find a least-squares solution to the linear equation

Paper 4, Section I, H

For a Markov chain on a state space with , we let for be the probability that when .

(a) Let be a Markov chain. Prove that if is recurrent at a state , then . [You may use without proof that the number of returns of a Markov chain to a state when starting from has the geometric distribution.]

(b) Let and be independent simple symmetric random walks on starting from the origin 0. Let . Prove that and deduce that . [You may use without proof that for all and , and that is recurrent at 0.]

Paper 4, Section I, D

Let

By considering the integral , where is a smooth, bounded function that vanishes sufficiently rapidly as , identify in terms of a generalized function.

Paper 4, Section II, B

(a) Show that the operator

where and are real functions, is self-adjoint (for suitable boundary conditions which you need not state) if and only if

(b) Consider the eigenvalue problem

on the interval with boundary conditions

Assuming that is everywhere negative, show that all eigenvalues are positive.

(c) Assume now that and that the eigenvalue problem (*) is on the interval with . Show that is an eigenvalue provided that

and show graphically that this condition has just one solution in the range .

[You may assume that all eigenfunctions are either symmetric or antisymmetric about

Paper 4, Section II, G

(a) Define the subspace, quotient and product topologies.

(b) Let be a compact topological space and a Hausdorff topological space. Prove that a continuous bijection is a homeomorphism.

(c) Let , equipped with the product topology. Let be the smallest equivalence relation on such that and , for all . Let

equipped with the subspace topology from . Prove that and are homeomorphic.

[You may assume without proof that is compact.]

Paper 4, Section I, C

Calculate the factorization of the matrix

Use this to evaluate and to solve the equation

with

Paper 4, Section II, H

(a) State and prove the max-flow min-cut theorem.

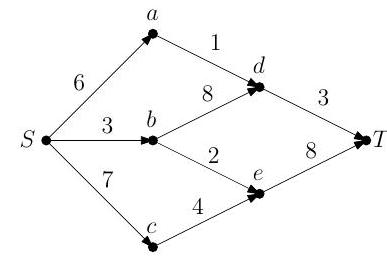

(b) (i) Apply the Ford-Fulkerson algorithm to find the maximum flow of the network illustrated below, where is the source and is the sink.

(ii) Verify the optimality of your solution using the max-flow min-cut theorem.

(iii) Is there a unique flow which attains the maximum? Explain your answer.

(c) Prove that the Ford-Fulkerson algorithm always terminates when the network is finite, the capacities are integers, and the algorithm is initialised with zero flow across all edges. Prove also that in this case the flow across each edge is an integer.
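For readers who want to experiment with the setting of part (c), the following is a minimal sketch of the Ford-Fulkerson method under exactly those assumptions: a finite network, integer capacities, and zero initial flow. The network used here (nodes `s`, `a`, `b`, `t` and their capacities) is hypothetical and is not the network in the figure; the function name `ford_fulkerson` and the depth-first search for augmenting paths are illustrative choices, and the sketch assumes there is no pair of edges running in opposite directions between the same two nodes.

```python
from collections import defaultdict


def ford_fulkerson(capacity, source, sink):
    """Return (value, flow) for integer capacities, starting from zero flow.

    `capacity` maps directed edges (u, v) to non-negative integers.
    Assumption of this sketch: no antiparallel edge pairs (u, v) and (v, u).
    """
    # residual[u][v] is the remaining capacity of the residual edge u -> v.
    residual = defaultdict(lambda: defaultdict(int))
    for (u, v), c in capacity.items():
        residual[u][v] += c
        residual[v][u] += 0  # make sure the reverse residual edge exists

    def augmenting_path():
        # Depth-first search for a source-to-sink path of positive residual capacity.
        parent = {source: None}
        stack = [source]
        while stack:
            u = stack.pop()
            for v, r in residual[u].items():
                if r > 0 and v not in parent:
                    parent[v] = u
                    stack.append(v)
        if sink not in parent:
            return None
        path, v = [], sink
        while parent[v] is not None:
            path.append((parent[v], v))
            v = parent[v]
        return path[::-1]

    value = 0
    while True:
        path = augmenting_path()
        if path is None:
            break
        # The bottleneck is a positive integer, so the value grows by at least 1
        # per augmentation and is bounded above by the capacity out of the source.
        bottleneck = min(residual[u][v] for u, v in path)
        for u, v in path:
            residual[u][v] -= bottleneck
            residual[v][u] += bottleneck
        value += bottleneck

    # Recover the flow on each original edge from the residual capacities.
    flow = {(u, v): c - residual[u][v] for (u, v), c in capacity.items()}
    return value, flow


# Hypothetical example network (not the one from the question).
capacity = {
    ("s", "a"): 3,
    ("s", "b"): 2,
    ("a", "b"): 1,
    ("a", "t"): 2,
    ("b", "t"): 3,
}
value, flow = ford_fulkerson(capacity, "s", "t")
```

In this example the cut separating `s` from the other nodes has capacity 3 + 2 = 5, which matches the computed `value`, so the max-flow min-cut theorem certifies optimality. Every augmentation changes edge flows by an integer amount, which is the mechanism behind the integrality claim in part (c).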

Paper 4, Section I, B

(a) Define the probability density and probability current for the wavefunction of a particle of mass . Show that

and deduce that for a normalizable, stationary state wavefunction. Give an example of a non-normalizable, stationary state wavefunction for which is non-zero, and calculate the value of .

(b) A particle has the instantaneous, normalized wavefunction

where is positive and is real. Calculate the expectation value of the momentum for this wavefunction.

Paper 4, Section II, H

Consider the linear model

where are known and are i.i.d. . We assume that the parameters and are unknown.

(a) Find the MLE of . Explain why is the same as the least squares estimator of .

(b) State and prove the Gauss-Markov theorem for this model.

(c) For each value of with , determine the unbiased linear estimator of which minimizes

Paper 4, Section II, A

Consider the functional

where is subject to boundary conditions as with . [You may assume the integral converges.]

(a) Find expressions for the first-order and second-order variations and resulting from a variation that respects the boundary conditions.

(b) If , show that if and only if for all . Explain briefly how this is consistent with your results for and in part (a).

(c) Now suppose that with . By considering an integral of , show that

with equality if and only if satisfies a first-order differential equation. Deduce that global minima of with the specified boundary conditions occur precisely for

where is a constant. How is the first-order differential equation that appears in this case related to your general result for in part (a)?