Part II, 2015, Paper 4

Paper 4, Section II, F

(i) Explain how a linear system on a curve may induce a morphism from the curve to projective space. What condition on the linear system is necessary to yield a morphism such that the pull-back of a hyperplane section is an element of the linear system? What condition is necessary to imply the morphism is an embedding?

(ii) State the Riemann-Roch theorem for curves.

(iii) Show that any divisor of degree 5 on a curve of genus 2 induces an embedding.

Paper 4, Section II, H

State the Mayer-Vietoris theorem for a simplicial complex which is the union of two subcomplexes and . Explain briefly how the connecting homomorphism is defined.

If is the union of subcomplexes , with , such that each intersection

is either empty or has the homology of a point, then show that

Construct examples for each showing that this is sharp.

Paper 4, Section II,

Let be a Bravais lattice with basis vectors . Define the reciprocal lattice and write down basis vectors for in terms of the basis for .

A finite crystal consists of identical atoms at sites of given by

A particle of mass scatters off the crystal; its wavevector is before scattering and after scattering, with . Show that the scattering amplitude in the Born approximation has the form

where is the potential due to a single atom at the origin and depends on the crystal structure. [You may assume that in the Born approximation the amplitude for scattering off a potential is where tilde denotes the Fourier transform.]

Derive an expression for that is valid when . Show also that when is a reciprocal lattice vector is equal to the total number of atoms in the crystal. Comment briefly on the significance of these results.

Now suppose that is a face-centred-cubic lattice:

where is a constant. Show that for a particle incident with , enhanced scattering is possible for at least two values of the scattering angle, and , related by

Paper 4, Section II, K

(i) Let be a Markov chain on and . Let be the hitting time of and denote the total time spent at by the chain before hitting . Show that if , then

(ii) Define the Moran model and show that if is the number of individuals carrying allele at time and is the fixation time of allele , then

Show that conditionally on fixation of an allele being present initially in individuals,
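The Python sketch below is a quick numerical check of the standard fact that, in a Moran model of size N, an allele initially carried by i individuals fixes with probability i/N. It assumes the common version of the model: at each event, one individual chosen uniformly at random reproduces, and one individual chosen uniformly at random (independently) dies and is replaced by the offspring. The names N, i and the helper functions are illustrative and are not the question's notation.

```python
import random

def moran_fixes(N, i, rng):
    """Simulate one Moran path (assumed variant: parent and victim chosen
    independently and uniformly). Returns True if allele A reaches N copies."""
    while 0 < i < N:
        parent_is_A = rng.random() < i / N   # reproducing individual carries A
        dying_is_A = rng.random() < i / N    # dying individual carries A
        i += int(parent_is_A) - int(dying_is_A)
    return i == N

def fixation_frequency(N, i, runs=5000, seed=0):
    """Monte Carlo estimate of the fixation probability starting from i copies."""
    rng = random.Random(seed)
    return sum(moran_fixes(N, i, rng) for _ in range(runs)) / runs

# The count i is a bounded martingale, so the estimate should be close to i/N.
estimate = fixation_frequency(10, 3)
```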

Paper 4, Section II, C

Consider the ordinary differential equation

where

and are constants. Look for solutions in the asymptotic form

and determine in terms of , as well as in terms of .

Deduce that the Bessel equation

where is a complex constant, has two solutions of the form

and determine and in terms of

Can the above asymptotic expansions be valid for all , or are they valid only in certain domains of the complex -plane? Justify your answer briefly.

Paper 4, Section I, D

A triatomic molecule is modelled by three masses moving in a line while connected to each other by two identical springs of force constant as shown in the figure.

(a) Write down the Lagrangian and derive the equations describing the motion of the atoms.

(b) Find the normal modes and their frequencies. What motion does the lowest frequency represent?
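Normal modes solve the generalised eigenvalue problem K v = ω² M v, where V = ½ xᵀKx is the spring potential. The Python sketch below checks three candidate modes by computing the residual of K v − ω² M v. The masses are not given above, so it hypothetically assumes outer masses m, a central mass M and spring constant k.

```python
def stiffness(k):
    """K for V = k/2 [(x2 - x1)^2 + (x3 - x2)^2], written as V = 1/2 x^T K x."""
    return [[k, -k, 0.0], [-k, 2 * k, -k], [0.0, -k, k]]

def candidate_modes(k, m, M):
    """(omega^2, amplitude) pairs for the hypothetical masses (m, M, m)."""
    return [
        (0.0, [1.0, 1.0, 1.0]),                             # rigid translation
        (k / m, [1.0, 0.0, -1.0]),                          # centre at rest, ends opposite
        (k / m * (1 + 2 * m / M), [1.0, -2 * m / M, 1.0]),  # centre opposes both ends
    ]

def residual(k, masses, omega2, v):
    """Largest entry of |K v - omega^2 M v|; zero exactly for a normal mode."""
    K = stiffness(k)
    Kv = [sum(K[i][j] * v[j] for j in range(3)) for i in range(3)]
    return max(abs(Kv[i] - omega2 * masses[i] * v[i]) for i in range(3))

modes = candidate_modes(2.0, 1.0, 3.0)
```

With this assumed mass arrangement, the zero-frequency mode is a uniform translation of the whole molecule.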

Paper 4, Section II, C

Consider a rigid body with angular velocity , angular momentum and position vector , in its body frame.

(a) Use the expression for the kinetic energy of the body,

to derive an expression for the tensor of inertia of the body, I. Write down the relationship between and .

(b) Euler's equations of torque-free motion of a rigid body are

Working in the frame of the principal axes of inertia, use Euler's equations to show that the energy and the squared angular momentum are conserved.

(c) Consider a cuboid with sides and , and with mass distributed uniformly.

(i) Use the expression for the tensor of inertia derived in (a) to calculate the principal moments of inertia of the body.

(ii) Assume and , and suppose that the initial conditions are such that

with the initial angular velocity perpendicular to the intermediate principal axis . Derive the first order differential equation for in terms of and and hence determine the long-term behaviour of .

Paper 4, Section I, G

Explain how to construct binary Reed-Muller codes. State and prove a result giving the minimum distance for each such Reed-Muller code.
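The Python sketch below builds the codes with the recursive bar-product construction RM(r, m) = {(u | u + v) : u ∈ RM(r, m−1), v ∈ RM(r−1, m−1)}. RM(0, m) is taken to be the repetition code and RM(m, m) all of F₂^(2^m). It then confirms the standard minimum distance 2^(m−r) by brute force for small m. The function names are illustrative. A course may instead define RM codes by evaluating monomials; the sketch assumes the recursive description.

```python
from itertools import product

def rm_code(r, m):
    """Codewords of RM(r, m) as 0/1 tuples of length 2**m (bar-product recursion)."""
    n = 2 ** m
    if r == 0:
        return {tuple([0] * n), tuple([1] * n)}   # repetition code
    if r >= m:
        return set(product((0, 1), repeat=n))     # the whole space F_2^n
    left = rm_code(r, m - 1)        # u ranges over RM(r, m-1)
    right = rm_code(r - 1, m - 1)   # v ranges over RM(r-1, m-1)
    return {u + tuple((a + b) % 2 for a, b in zip(u, v))
            for u in left for v in right}

def min_distance(code):
    """For a linear code, the minimum distance equals the least nonzero weight."""
    return min(sum(c) for c in code if any(c))
```

For example, `min_distance(rm_code(1, 3))` is 4 = 2^(3−1), since RM(1, 3) is the extended Hamming code of length 8.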

Paper 4, Section I, C

Calculate the total effective number of relativistic spin states present in the early universe when the temperature is if there are three species of low-mass neutrinos and antineutrinos in addition to photons, electrons and positrons. If the weak interaction rate is and the expansion rate of the universe is , where is the total density of the universe, calculate the temperature at which the neutrons and protons cease to interact via weak interactions, and show that .
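For the first part, a standard count gives the value below. It assumes two photon polarisations, four e± states, and one helicity state each for ν and ν̄ in each of the three species, with fermionic states weighted by 7/8:

```latex
g_* = g_\gamma + \tfrac{7}{8}\left(g_{e^\pm} + g_{\nu\bar\nu}\right)
    = 2 + \tfrac{7}{8}\,(4 + 3 \times 2) = \tfrac{43}{4} = 10.75
```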

State the formula for the equilibrium ratio of neutrons to protons at , and briefly describe the sequence of events as the temperature falls from to the temperature at which the nucleosynthesis of helium and deuterium ends.

What is the effect of an increase or decrease of on the abundance of helium-4 resulting from nucleosynthesis? Why do changes in have a very small effect on the final abundance of deuterium?

Paper 4, Section II, G

Let denote the set of unitary complex matrices. Show that is a smooth (real) manifold, and find its dimension. [You may use any general results from the course provided they are stated correctly.] For any matrix in and an complex matrix, determine when represents a tangent vector to at .

Consider the tangent spaces to equipped with the metric induced from the standard (Euclidean) inner product on the real vector space of complex matrices, given by , where denotes the real part and denotes the conjugate transpose of . Suppose that represents a tangent vector to at the identity matrix . Sketch an explicit construction of a geodesic curve on passing through and with tangent direction , giving a brief proof that the acceleration of the curve is always orthogonal to the tangent space to .

[Hint: You will find it easier to work directly with complex matrices, rather than the corresponding real matrices.]
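The Python sketch below is a numerical sanity check. It assumes the geodesic through I with tangent direction A is the one-parameter subgroup γ(t) = exp(tA), where A is skew-Hermitian (A* = −A). Then γ'' = A²γ, A² is Hermitian, and γ commutes with A. So the acceleration should have zero inner product Re tr(X*Y) with every tangent vector γB at γ(t), for any skew-Hermitian B. The Taylor-series exponential and the sample matrices are illustrative choices.

```python
def mat_mul(X, Y):
    n = len(X)
    return [[sum(X[i][k] * Y[k][j] for k in range(n)) for j in range(n)] for i in range(n)]

def conj_T(X):
    """Conjugate transpose X^*."""
    n = len(X)
    return [[X[j][i].conjugate() for j in range(n)] for i in range(n)]

def expm(X, terms=40):
    """Matrix exponential by truncated Taylor series (fine for moderate ||X||)."""
    n = len(X)
    result = [[complex(i == j) for j in range(n)] for i in range(n)]
    term = [row[:] for row in result]
    for k in range(1, terms):
        term = [[v / k for v in row] for row in mat_mul(term, X)]  # X^k / k!
        result = [[result[i][j] + term[i][j] for j in range(n)] for i in range(n)]
    return result

def inner(X, Y):
    """Real inner product Re tr(X^* Y) on complex n x n matrices."""
    n = len(X)
    return sum(X[k][i].conjugate() * Y[k][i] for i in range(n) for k in range(n)).real

# Hypothetical skew-Hermitian direction A and time t.
A = [[1j, 2 + 1j], [-2 + 1j, -0.5j]]
t = 0.7
U = expm([[t * v for v in row] for row in A])   # gamma(t) = exp(tA)
acceleration = mat_mul(mat_mul(A, A), U)        # gamma''(t) = A^2 gamma(t)
```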

Paper 4, Section II, B

Let be a continuous one-dimensional map of an interval . Explain what is meant by the statements (i) that has a horseshoe and (ii) that is chaotic (according to Glendinning's definition).

Assume that has a 3-cycle with and, without loss of generality, . Prove that has a horseshoe. [You may assume the intermediate value theorem.]

Represent the effect of on the intervals and by means of a directed graph, explaining carefully how the graph is constructed. Explain what feature of the graph implies the existence of a 3-cycle.

The map has a 5-cycle with and , and . For which , is an -cycle of guaranteed to exist? Is guaranteed to be chaotic? Is guaranteed to have a horseshoe? Justify your answers. [You may use a suitable directed graph as part of your arguments.]

How do your answers to the above change if instead ?

Paper 4, Section II, A

A point particle of charge has trajectory in Minkowski space, where is its proper time. The resulting electromagnetic field is given by the Liénard-Wiechert 4-potential

Write down the condition that determines the point on the trajectory of the particle for a given value of . Express this condition in terms of components, setting and , and define the retarded time .

Suppose that the 3-velocity of the particle is small in size compared to , and suppose also that . Working to leading order in and to first order in , show that

Now assume that can be replaced by in the expressions for and above. Calculate the electric and magnetic fields to leading order in and hence show that the Poynting vector is (in this approximation)

If the charge is performing simple harmonic motion , where is a unit vector and , find the total energy radiated during one period of oscillation.

Paper 4, Section II, E

A stationary inviscid fluid of thickness and density is located below a free surface at and above a deep layer of inviscid fluid of the same density in flowing with uniform velocity in the direction. The base velocity profile is thus

while the free surface at is maintained flat by gravity.

By considering small perturbations of the vortex sheet at of the form , calculate the dispersion relationship between and in the irrotational limit. By explicitly deriving that

deduce that the vortex sheet is unstable at all wavelengths. Show that the growth rates of the unstable modes are approximately when and when .

Paper 4, Section I, B

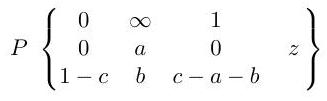

Explain how the Papperitz symbol

represents a differential equation with certain properties. [You need not write down the differential equation explicitly.]

The hypergeometric function is defined to be the solution of the equation given by the Papperitz symbol

that is analytic at and such that . Show that

indicating clearly any general results for manipulating Papperitz symbols that you use.

Paper 4, Section II,

(i) Prove that a finite solvable extension of fields of characteristic zero is a radical extension.

(ii) Let be variables, , and where are the elementary symmetric polynomials in the variables . Is there an element such that but ? Justify your answer.

(iii) Find an example of a field extension of degree two such that for any . Give an example of a field which has no extension containing a primitive root of unity.

Paper 4, Section II, D

In static spherically symmetric coordinates, the metric for de Sitter space is given by

where and is a constant.

(a) Let for . Use the coordinates to show that the surface is non-singular. Is a space-time singularity?

(b) Show that the vector field is null.

(c) Show that the radial null geodesics must obey either

Which of these families of geodesics is outgoing?

Sketch these geodesics in the plane for , where the -axis is horizontal and lines of constant are inclined at to the horizontal.

(d) Show, by giving an explicit example, that an observer moving on a timelike geodesic starting at can cross the surface within a finite proper time.

Paper 4, Section II, I

Let be a bipartite graph with vertex classes and . What does it mean to say that contains a matching from to ? State and prove Hall's Marriage Theorem.

Suppose now that every has , and that if and with then . Show that contains a matching from to .
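Hall's theorem can be sanity-checked on small examples. The Python sketch below assumes the graph is given as a dict mapping each vertex of the first class to its set of neighbours in the second class. It finds a maximum matching by augmenting paths (Kuhn's algorithm, a standard method not taken from the question). It also tests Hall's condition |Γ(A)| ≥ |A| over every subset A by brute force, so the two can be compared.

```python
from itertools import combinations

def max_matching(adj):
    """Kuhn's augmenting-path algorithm; returns a dict y -> x of matched pairs."""
    match = {}

    def try_augment(x, seen):
        for y in adj[x]:
            if y in seen:
                continue
            seen.add(y)
            # Use y if it is free, or if its current partner can be re-matched.
            if y not in match or try_augment(match[y], seen):
                match[y] = x
                return True
        return False

    for x in adj:
        try_augment(x, set())
    return match

def hall_condition(adj):
    """True iff every subset A of X has at least |A| neighbours (exponential check)."""
    xs = list(adj)
    for k in range(1, len(xs) + 1):
        for subset in combinations(xs, k):
            if len(set().union(*(adj[x] for x in subset))) < k:
                return False
    return True

def has_matching_from_x(adj):
    """A matching from X exists iff a maximum matching saturates every x in X."""
    return len(max_matching(adj)) == len(adj)
```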

Paper 4, Section II, G

Let be a Hilbert space and . Define what is meant by an adjoint of and prove that it exists, it is linear and bounded, and that it is unique. [You may use the Riesz Representation Theorem without proof.]

What does it mean to say that is a normal operator? Give an example of a bounded linear map on that is not normal.

Show that is normal if and only if for all .

Prove that if is normal, then , that is, that every element of the spectrum of is an approximate eigenvalue of .

Paper 4, Section II, I

State the Axiom of Foundation and the Principle of -Induction, and show that they are equivalent (in the presence of the other axioms of ). [You may assume the existence of transitive closures.]

Explain briefly how the Principle of -Induction implies that every set is a member of some .

Find the ranks of the following sets:

(i) ,

(ii) the Cartesian product ,

(iii) the set of all functions from to .

[You may assume standard properties of rank.]

Paper 4, Section I, E

(i) A variant of the classic logistic population model is given by the Hutchinson-Wright equation

where . Determine the condition on (in terms of ) for the constant solution to be stable.
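The Python sketch below is a rough numerical illustration. It assumes the Hutchinson-Wright equation takes its usual form dN/dt = rN(t)(1 − N(t − T)/K), with growth rate r, delay T and carrying capacity K. The equation is not reproduced above, so these symbols are assumptions. The sketch integrates the equation by forward Euler from a constant history. It then compares the late-time deviation from K for rT = 1 and rT = 2, which lie on either side of the standard linearised threshold rT = π/2.

```python
def simulate_hutchinson(r, T, K=1.0, N0=0.5, dt=1e-3, t_end=100.0):
    """Forward Euler for dN/dt = r N(t) (1 - N(t - T)/K), history N = N0 for t <= 0."""
    lag = int(round(T / dt))        # the delay T measured in time steps
    steps = int(round(t_end / dt))
    N = [N0]
    for i in range(steps):
        delayed = N[i - lag] if i >= lag else N0   # N(t - T)
        N.append(N[i] + dt * r * N[i] * (1.0 - delayed / K))
    return N

def late_deviation(N, K=1.0, fraction=0.2):
    """Largest |N - K| over the final `fraction` of the run."""
    tail = N[int(len(N) * (1 - fraction)):]
    return max(abs(v - K) for v in tail)

settles = late_deviation(simulate_hutchinson(r=1.0, T=1.0))    # rT = 1
oscillates = late_deviation(simulate_hutchinson(r=1.0, T=2.0))  # rT = 2
```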

(ii) Another variant of the logistic model is given by the equation

where . Give a brief interpretation of what this model represents.

Determine the condition on (in terms of ) for the constant solution to be stable in this model.

Paper 4, Section II, E

In a stochastic model of multiple populations, is the probability that the population sizes are given by the vector at time . The jump rate is the probability per unit time that the population sizes jump from to . Under suitable assumptions, the system may be approximated by the multivariate Fokker-Planck equation (with summation convention)

where and matrix elements .

(a) Use the multivariate Fokker-Planck equation to show that

[You may assume that as .]

(b) For small fluctuations, you may assume that the vector may be approximated by a linear function in and the matrix may be treated as constant, i.e. and (where and are constants). Show that at steady state the covariances satisfy

(c) A lab-controlled insect population consists of larvae and adults. Larvae are added to the system at rate . Larvae each mature at rate per capita. Adults die at rate per capita. Give the vector and matrix for this model. Show that at steady state

(d) Find the variance of each population size near steady state, and show that the covariance between the populations is zero.

Paper 4, Section II, H

Let be a number field. State Dirichlet's unit theorem, defining all the terms you use, and what it implies for a quadratic field , where is a square-free integer.

Find a fundamental unit of .

Find all integral solutions of the equation .

Paper 4, Section I, H

Show that if is prime then must be a power of 2. Now assuming is a power of 2, show that if is a prime factor of then .

Explain the method of Fermat factorization, and use it to factor .
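Fermat's method starts at a = ⌈√n⌉ and increases a until a² − n is a perfect square b². This gives n = (a − b)(a + b). The Python sketch below implements it. The number 5959 = 59 × 101 used to check it is a hypothetical example, not the number in the question.

```python
from math import isqrt

def fermat_factor(n):
    """Fermat factorization of odd n >= 1; returns (a - b, a + b) with a^2 - b^2 = n."""
    if n % 2 == 0:
        raise ValueError("Fermat's method assumes n is odd")
    a = isqrt(n)
    if a * a < n:
        a += 1                 # start at ceil(sqrt(n))
    while True:
        b2 = a * a - n
        b = isqrt(b2)
        if b * b == b2:        # a^2 - n is a perfect square
            return a - b, a + b
        a += 1
```

For 5959 the search stops at a = 80, since 80² − 5959 = 441 = 21². Because (a, b) = ((n + 1)/2, (n − 1)/2) always works, the loop terminates; for a prime n it returns only the trivial factorization (1, n). The method is fast when n has two factors close to √n.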

Paper 4, Section II, H

State the Chinese Remainder Theorem.

Let be an odd positive integer. Define the Jacobi symbol . Which of the following statements are true, and which are false? Give a proof or counterexample as appropriate.

(i) If then the congruence is soluble.

(ii) If is not a square then .

(iii) If is composite then there exists an integer a coprime to with

(iv) If is composite then there exists an integer coprime to with
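When testing statements such as (i) to (iv) on small cases, it helps to compute Jacobi symbols mechanically. The Python sketch below evaluates (a/n) with the standard reciprocity algorithm, using the rule for (2/n) and quadratic reciprocity. It also decides solubility of x² ≡ a (mod n) by brute force. For example, (2/15) = (2/3)(2/5) = 1, yet 2 is not a square modulo 15. The function names are illustrative.

```python
def jacobi(a, n):
    """Jacobi symbol (a/n) for odd positive n."""
    if n <= 0 or n % 2 == 0:
        raise ValueError("n must be an odd positive integer")
    a %= n
    result = 1
    while a != 0:
        while a % 2 == 0:              # (2/n) = -1 iff n = 3, 5 (mod 8)
            a //= 2
            if n % 8 in (3, 5):
                result = -result
        a, n = n, a                    # reciprocity: sign flips iff both are 3 mod 4
        if a % 4 == 3 and n % 4 == 3:
            result = -result
        a %= n
    return result if n == 1 else 0    # n > 1 here means gcd(a, n) > 1

def is_square_mod(a, n):
    """Brute force: is x^2 = a (mod n) soluble?"""
    return any((x * x - a) % n == 0 for x in range(n))
```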

Paper 4, Section II, E

(a) Define the th Krylov space for and . Letting be the dimension of , prove the following results.

(i) There exists a positive integer such that for and for .

(ii) If , where are eigenvectors of for distinct eigenvalues and all are nonzero, then .

(b) Define the term residual in the conjugate gradient (CG) method for solving a system with symmetric positive definite . Explain (without proof) the connection to Krylov spaces and prove that for any right-hand side the CG method finds an exact solution after at most steps, where is the number of distinct eigenvalues of . [You may use without proof known properties of the iterates of the CG method.]
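The plain-Python CG sketch below (no preconditioning, x₀ = 0) shows the "at most k steps" claim numerically. The test matrices are hypothetical. A = I + uuᵀ has exactly two distinct eigenvalues, 1 and 1 + |u|², so its residual should vanish after two steps up to rounding. The diagonal matrix diag(1, 1, 2, 2, 3, 3) has three distinct eigenvalues, so it should need three steps.

```python
def matvec(A, x):
    """Dense matrix-vector product, A stored as a list of rows."""
    return [sum(a * b for a, b in zip(row, x)) for row in A]

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def conjugate_gradient(A, b, max_steps):
    """Unpreconditioned CG for symmetric positive definite A, starting at x0 = 0.
    Returns the last iterate and the residual norms ||b - A x_k||, k = 0, 1, ..."""
    x = [0.0] * len(b)
    r = list(b)                  # r_0 = b - A x_0
    p = list(r)                  # first search direction
    norms = [dot(r, r) ** 0.5]
    for _ in range(max_steps):
        rr = dot(r, r)
        if rr == 0.0:
            break
        Ap = matvec(A, p)
        alpha = rr / dot(p, Ap)                         # exact line search along p
        x = [xi + alpha * pi for xi, pi in zip(x, p)]
        r = [ri - alpha * qi for ri, qi in zip(r, Ap)]
        beta = dot(r, r) / rr                           # keeps directions A-conjugate
        p = [ri + beta * pi for ri, pi in zip(r, p)]
        norms.append(dot(r, r) ** 0.5)
    return x, norms

# Hypothetical example: I + u u^T has only the eigenvalues 1 and 1 + |u|^2 = 56.
u = [1.0, 2.0, 3.0, 4.0, 5.0]
A = [[(1.0 if i == j else 0.0) + u[i] * u[j] for j in range(5)] for i in range(5)]
x, norms = conjugate_gradient(A, [1.0] * 5, max_steps=2)
```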

Define what is meant by preconditioning, and explain two ways in which preconditioning can speed up convergence. Can we choose the preconditioner so that the CG method requires only one step? If yes, is it a reasonable method for speeding up the computation?

Paper 4, Section II,

Consider the scalar system evolving as

where is a white noise sequence with and . It is desired to choose controls to minimize . Show that for the minimal cost is .

Find a constant and a function which solve

Let be the class of those policies for which every obeys the constraint . Show that , for all . Find, and prove optimal, a policy which over all minimizes

Paper 4, Section II, E

(a) Show that the Cauchy problem for satisfying

with initial data , which is a smooth -periodic function of , defines a strongly continuous one parameter semi-group of contractions on the Sobolev space for any .

(b) Solve the Cauchy problem for the equation

with , where are smooth -periodic functions of , and show that the solution is smooth. Prove from first principles that the solution satisfies the property of finite propagation speed.

[In this question all functions are real-valued, and

are the Sobolev spaces of functions which are -periodic in , for

Paper 4, Section II, A

The Hamiltonian for a quantum system in the Schrödinger picture is , where is independent of time and the parameter is small. Define the interaction picture corresponding to this Hamiltonian and derive a time evolution equation for interaction picture states.

Suppose that and are eigenstates of with distinct eigenvalues and , respectively. Show that if the system is in state at time zero then the probability of measuring it to be in state at time is

Let be the Hamiltonian for an isotropic three-dimensional harmonic oscillator of mass and frequency , with being the ground state wavefunction (where ) and being wavefunctions for the states at the first excited energy level . The oscillator is in its ground state at when a perturbation

is applied, with , and is then measured after a very large time has elapsed. Show that to first order in perturbation theory the oscillator will be found in one particular state at the first excited energy level with probability

but that the probability that it will be found in either of the other excited states is zero (to this order).

You may use the fact that

Paper 4, Section II,

Given independent and identically distributed observations with finite mean and variance , explain the notion of a bootstrap sample , and discuss how you can use it to construct a confidence interval for .

Suppose you can operate a random number generator that can simulate independent uniform random variables on . How can you use such a random number generator to simulate a bootstrap sample?
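The Python sketch below simulates a bootstrap sample using only U(0, 1) variables. Since ⌊nU⌋ is uniform on {0, 1, ..., n − 1}, indexing the data with it draws one observation with replacement. The interval built here is the "basic" bootstrap interval, which approximates the quantiles of X̄ₙ − μ by those of X̄*ₙ − X̄ₙ. It is one standard choice and is assumed, not quoted, to match the interval in the question. The function names and the defaults alpha, B and seed are illustrative.

```python
import random
from math import floor

def bootstrap_sample(data, rng):
    """Resample n points with replacement: index floor(n * U) with U ~ U[0, 1)."""
    n = len(data)
    # min(...) is a harmless guard against floating-point edge effects near U = 1
    return [data[min(floor(n * rng.random()), n - 1)] for _ in range(n)]

def bootstrap_mean_interval(data, alpha=0.05, B=2000, seed=0):
    """Basic bootstrap interval for the mean from B resampled means."""
    rng = random.Random(seed)
    n = len(data)
    xbar = sum(data) / n
    diffs = sorted(sum(bootstrap_sample(data, rng)) / n - xbar for _ in range(B))
    lo_q = diffs[int((alpha / 2) * B)]            # approx. alpha/2 quantile
    hi_q = diffs[int((1 - alpha / 2) * B) - 1]    # approx. 1 - alpha/2 quantile
    return xbar - hi_q, xbar - lo_q
```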

Suppose that and are cumulative probability distribution functions defined on the real line, that as for every , and that is continuous on . Show that, as ,

State (without proof) the theorem about the consistency of the bootstrap of the mean, and use it to give an asymptotic justification of the confidence interval . That is, prove that as where is the joint distribution of

[You may use standard facts of stochastic convergence and the Central Limit Theorem without proof.]

Paper 4, Section II, J

(a) State Fatou's lemma.

(b) Let be a random variable on and let be a sequence of random variables on . What does it mean to say that weakly?

State and prove the Central Limit Theorem for i.i.d. real-valued random variables. [You may use auxiliary theorems proved in the course provided these are clearly stated.]

(c) Let be a real-valued random variable with characteristic function . Let be a sequence of real numbers with and . Prove that if we have

then

Paper 4, Section II, F

(a) Let be the circle group. Assuming any required facts about continuous functions from real analysis, show that every 1-dimensional continuous representation of is of the form

for some .

(b) Let , and let be a continuous representation of on a finite-dimensional vector space .

(i) Define the character of , and show that .

(ii) Show that .

(iii) Let be the irreducible 4-dimensional representation of . Decompose into irreducible representations. Hence decompose the exterior square into irreducible representations.

Paper 4, Section I, J

Data on 173 nesting female horseshoe crabs record for each crab its colour as one of 4 factors (simply labelled ), its width (in ) and the presence of male crabs nearby (a 1 indicating presence). The data are collected into the data frame crabs and the first few lines are displayed below.

Describe the model being fitted by the command below.

fit1 <- glm(males ~ colour + width, family = binomial, data = crabs)

The following (abbreviated) output is obtained from the summary command.

Write out the calculation for an approximate confidence interval for the coefficient for width. Describe the calculation you would perform to obtain an estimate of the probability that a female crab of colour 3 and with a width of has males nearby. [You need not actually compute the end points of the confidence interval or the estimate of the probability above, but merely show the calculations that would need to be performed in order to arrive at them.]

Paper 4, Section II, J

Consider the normal linear model where the -vector of responses satisfies with . Here is an matrix of predictors with full column rank where and is an unknown vector of regression coefficients. For , denote the th column of by , and let be with its th column removed. Suppose where is an -vector of 1's. Denote the maximum likelihood estimate of by . Write down the formula for involving , the orthogonal projection onto the column space of .

Consider with . By thinking about the orthogonal projection of onto , show that

[You may use standard facts about orthogonal projections including the fact that if and are subspaces of with a subspace of and and denote orthogonal projections onto and respectively, then for all .]

By considering the fitted values , explain why if, for any , a constant is added to each entry in the th column of , then will remain unchanged. Let . Why is (*) also true when all instances of and are replaced by and respectively?

The marks from mid-year statistics and mathematics tests and an end-of-year statistics exam are recorded for 100 secondary school students. The first few lines of the data are given below.

The following abbreviated output is obtained:

What are the hypothesis tests corresponding to the final column of the coefficients table? What is the hypothesis test corresponding to the final line of the output? Interpret the results when testing at the level.

How does the following sample correlation matrix for the data help to explain the relative sizes of some of the -values?

Paper 4, Section II, C

The Ising model consists of particles, labelled by , arranged on a -dimensional Euclidean lattice with periodic boundary conditions. Each particle has spin up , or down , and the energy in the presence of a magnetic field is

where is a constant and indicates that the second sum is over each pair of nearest neighbours (every particle has nearest neighbours). Let , where is the temperature.

(i) Express the average spin per particle, , in terms of the canonical partition function .

(ii) Show that in the mean-field approximation

where is a single-particle partition function, is an effective magnetic field which you should find in terms of and , and is a prefactor which you should also evaluate.

(iii) Deduce an equation that determines for general values of and temperature . Without attempting to solve for explicitly, discuss how the behaviour of the system depends on temperature when , deriving an expression for the critical temperature and explaining its significance.

(iv) Comment briefly on whether the results obtained using the mean-field approximation for are consistent with an expression for the free energy of the form

where and are positive constants.

Paper 4, Section II,

(i) An investor in a single-period market with time-0 wealth may generate any time-1 wealth of the form , where is any element of a vector space of random variables. The investor's objective is to maximize , where is strictly increasing, concave and . Define the utility indifference price of a random variable .

Prove that the map is concave. [You may assume that any supremum is attained.]

(ii) Agent has utility . The agents may buy for time-0 price a risky asset which will be worth at time 1, where is random and has density

Assuming zero interest, prove that agent will optimally choose to buy

units of the risky asset at time 0.

If the asset is in unit net supply, if , and if , prove that the market for the risky asset will clear at price

What happens if

Paper 4, Section I,

Let be the set of all non-empty compact subsets of -dimensional Euclidean space . Define the Hausdorff metric on , and prove that it is a metric.

Let be a sequence in . Show that is also in and that as in the Hausdorff metric.
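For finite (hence compact) point sets, the Hausdorff distance is the larger of the two one-sided distances. This agrees with the ε-neighbourhood definition inf{ε : A ⊆ B_ε and B ⊆ A_ε}. The Python sketch below computes it directly and spot-checks the metric axioms on a few hypothetical sets.

```python
from math import dist

def hausdorff(A, B):
    """Hausdorff distance between finite non-empty point sets A, B in R^d:
    max(sup_{a in A} d(a, B), sup_{b in B} d(b, A))."""
    if not A or not B:
        raise ValueError("the Hausdorff metric is defined on non-empty sets")
    d_AB = max(min(dist(a, b) for b in B) for a in A)   # how far A sticks out of B
    d_BA = max(min(dist(a, b) for a in A) for b in B)   # how far B sticks out of A
    return max(d_AB, d_BA)
```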

Paper 4, Section II, 36B

The shallow-water equations

describe one-dimensional flow over a horizontal boundary with depth and velocity , where is the acceleration due to gravity.

Show that the Riemann invariants are constant along characteristics satisfying , where is the linear wave speed and denotes a reference state.

An initially stationary pool of fluid of depth is held between a stationary wall at and a removable barrier at . At the barrier is instantaneously removed allowing the fluid to flow into the region .

For , find and in each of the regions

explaining your argument carefully with a sketch of the characteristics in the plane.

For , show that the solution in region (ii) above continues to hold in the region . Explain why this solution does not hold in